Let’s be honest. Your Kubernetes cluster, on its bad days, feels less like a sleek, futuristic platform and more like a chaotic shared apartment right after college. The frontend team is “borrowing” CPU from the backend team, the analytics project left its sensitive data lying around in a public bucket, and nobody knows who finished the last of the memory reserves.

You tried to bring order. You dutifully handed out digital rooms to each team using namespaces. For a while, there was peace. But then those teams spawned their own little sub-projects (staging, testing, that weird experimental feature no one talks about), and your once-flat world devolved into a sprawling city with no zoning laws. The shenanigans continued, just inside slightly smaller boxes.

What you need isn’t more rules scribbled on a whiteboard. You need a family tree. It’s time to introduce some much-needed parental supervision into your cluster. It’s time for Hierarchical Namespaces.

The origin of the namespace rebellion

In the beginning, Kubernetes gave us namespaces, and they were good. The goal was simple: create virtual walls to stop teams from stealing each other’s lunch (metaphorically speaking, of course). Each namespace was its own isolated island, a sovereign nation with its own rules. This “flat earth” model worked beautifully… until it didn’t.

As organizations scaled, their clusters turned into bustling archipelagos of hundreds of namespaces. Managing them felt like being an air traffic controller for a fleet of paper airplanes in a hurricane. Teams realized that a flat structure was basically a free-for-all party where every guest could raid the fridge, as long as they stayed in their designated room. There was no easy way to apply a single rule, like a network policy or a set of permissions, to a group of related namespaces. The result was a maddening copy-paste-a-thon of YAML files, a breeding ground for configuration drift and human error.

The community needed a way to group these islands, to draw continents. And so, the Hierarchical Namespace Controller (HNC) was born, bringing a simple, powerful concept to the table: namespaces can have parents.
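Under the hood, "namespaces can have parents" is a parent-pointer tree. Here is a minimal Python sketch of that idea. It is a toy model, not HNC's code, and the namespace names are hypothetical. Each namespace records at most one parent, and re-parenting is refused if it would make a namespace its own ancestor, because a cycle would leave inheritance with no top to start from.

```python
# Toy parent-pointer model of a namespace hierarchy (not HNC's implementation).
# Each namespace maps to its parent; None marks a root.
parent = {"project-phoenix": None, "staging": "project-phoenix"}


def is_ancestor(candidate, ns):
    """Return True if candidate is ns itself or sits anywhere above it."""
    while ns is not None:
        if ns == candidate:
            return True
        ns = parent[ns]
    return False


def set_parent(ns, new_parent):
    """Attach ns under new_parent, refusing any move that would form a cycle."""
    if new_parent is not None:
        if new_parent not in parent:
            raise KeyError(f"unknown parent namespace: {new_parent}")
        if is_ancestor(ns, new_parent):
            raise ValueError(f"{new_parent} is {ns} or one of its descendants")
    parent[ns] = new_parent


set_parent("testing", "project-phoenix")  # fine: adds a new child

try:
    set_parent("project-phoenix", "staging")  # staging already sits under it
except ValueError as err:
    print("rejected:", err)
```

The second call is rejected because walking up from staging reaches project-phoenix, so the move would make project-phoenix its own grandparent.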

What this parenting gig gets you

Adopting a hierarchical structure isn’t just about satisfying your inner control freak. It comes with some genuinely fantastic perks that make cluster management feel less like herding cats.

- The “Because I said so” principle: This is the magic of policy inheritance. Any Role or RoleBinding you create in a parent namespace is automatically copied down to all its children, their children, and so on, and you can configure HNC to propagate other kinds, such as NetworkPolicy, the same way. It’s the parenting dream: set a rule once, and watch it apply to everyone. No more duplicating RBAC roles for the dev, staging, and testing environments of the same application.

- The family budget: With HNC’s hierarchical resource quotas (an optional feature; a plain ResourceQuota only limits its own namespace), you can set a quota on a parent namespace that becomes the total budget for that entire branch of the family tree. For instance, team-alpha gets 100 CPU cores in total. Their dev and qa children can squabble over that allowance, but together, they can’t exceed it. It’s like giving your kids a shared credit card instead of a blank check.

- Delegated authority: You can make a developer an admin of a “team” namespace. Thanks to inheritance, they automatically become an admin of all the sub-namespaces under it. They get the freedom to manage their own little kingdoms (staging, testing, feature-x) without needing to ping a cluster-admin for every little thing. You’re teaching them responsibility (while keeping the master keys to the kingdom, of course).
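The family-budget rule can be modeled in a few lines of Python. This is a simplified sketch, not HNC's admission logic: the namespaces team-alpha, dev, and qa come from the example above, while the current CPU usage figures are made up for illustration. A request is admitted only if, for every ancestor that carries a quota, the combined usage of that ancestor's whole subtree stays within the limit.

```python
# Simplified model of a shared, hierarchical CPU budget (not HNC's code).
# Parent links for the example tree; None marks the root.
PARENT = {"team-alpha": None, "dev": "team-alpha", "qa": "team-alpha"}

# Hypothetical CPU cores already in use in each namespace.
usage = {"team-alpha": 0, "dev": 60, "qa": 30}

# Quota attached to the parent: it caps the whole subtree, not just team-alpha.
quota = {"team-alpha": 100}


def ancestors_and_self(ns):
    """Yield ns, then its parent, grandparent, and so on up to the root."""
    while ns is not None:
        yield ns
        ns = PARENT[ns]


def subtree(root):
    """Return root plus every namespace that has root somewhere above it."""
    return [ns for ns in PARENT if root in ancestors_and_self(ns)]


def can_admit(ns, extra_cpu):
    """Admit extra_cpu in ns only if no quota on ns or its ancestors is exceeded."""
    for owner in ancestors_and_self(ns):
        if owner in quota:
            used = sum(usage[n] for n in subtree(owner))
            if used + extra_cpu > quota[owner]:
                return False
    return True


print(can_admit("dev", 10))  # 90 in use + 10 requested = 100: within budget
print(can_admit("qa", 11))   # 90 in use + 11 requested = 101: over budget
```

Neither dev nor qa has a quota of its own here, yet both are constrained, because the check walks up to team-alpha and sums usage across its entire branch.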

Let’s wrangle some namespaces

Convinced? I thought so. The good news is that bringing this parental authority to your cluster isn’t just a fantasy. Let’s roll up our sleeves and see how it works.

Step 0: Install the enforcer

Before we can start laying down the law, we need to invite the enforcer. The Hierarchical Namespace Controller (HNC) doesn’t come built-in with Kubernetes. You have to install it first.

You can typically install the latest version with a single kubectl command:

kubectl apply -f https://github.com/kubernetes-sigs/hierarchical-namespaces/releases/latest/download/hnc-manager.yaml

Give the controller a minute to start up in its own hnc-system namespace. The kubectl hns plugin ships separately from the controller, so install it too (for example, with krew: kubectl krew install hns). Once both are ready, you’ll have a new superpower: the kubectl hns command.

Step 1: Create the parent namespace

First, let’s create a top-level namespace for a project. We’ll call it project-phoenix. This will be our proud parent.

kubectl create namespace project-phoenix

Step 2: Create some children

Now, let’s give project-phoenix a couple of children: staging and testing. Wait, what’s that hns command? That’s not your standard kubectl. That’s the magic wand the HNC plugin just gave you. You’re telling it to create a staging namespace and neatly tuck it under its parent.

kubectl hns create staging -n project-phoenix

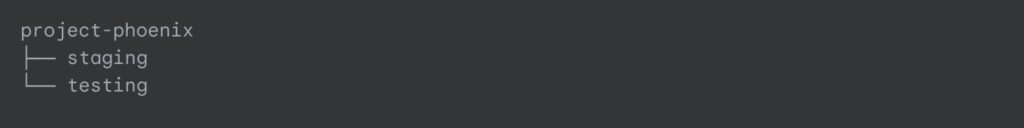

kubectl hns create testing -n project-phoenix

Step 3: Admire your family tree

To see your beautiful new hierarchy in all its glory, you can ask HNC to draw you a picture.

kubectl hns tree project-phoenix

You’ll get a satisfyingly clean ASCII-art diagram of your new family structure, with staging and testing branching off project-phoenix.

You can even create grandchildren. Let’s give the staging namespace its own child for a specific feature branch.

kubectl hns create feature-login-v2 -n staging

kubectl hns tree project-phoenix

And now your tree looks even more impressive, with feature-login-v2 nested under staging.
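If you're curious what the tree command is doing conceptually, here is a toy Python model of Steps 1 through 3 (not HNC's code). Each namespace stores its parent; create() loosely mirrors kubectl hns create by refusing unknown parents and duplicate names (namespace names are unique across the whole cluster); and render() walks the tree to draw box-drawing art. The layout is an illustration and won't necessarily match kubectl hns tree character for character.

```python
# Toy model of building and drawing a namespace tree (not HNC's implementation).
PARENT = {"project-phoenix": None}  # Step 1: a root namespace with no parent


def create(child, parent):
    """Add child under parent, roughly like `kubectl hns create child -n parent`."""
    if parent not in PARENT:
        raise KeyError(f"parent namespace {parent} does not exist")
    if child in PARENT:
        raise ValueError(f"namespace {child} already exists")  # names are cluster-wide
    PARENT[child] = parent


def children(ns):
    """Direct children of ns, sorted for stable output."""
    return sorted(c for c, p in PARENT.items() if p == ns)


def render(ns, prefix=""):
    """Return ASCII-art lines for everything below ns."""
    lines = []
    kids = children(ns)
    for i, kid in enumerate(kids):
        last = i == len(kids) - 1
        lines.append(prefix + ("└── " if last else "├── ") + kid)
        # Descendants of a non-last sibling keep a vertical bar in their prefix.
        lines.extend(render(kid, prefix + ("    " if last else "│   ")))
    return lines


def tree(root):
    """Full diagram: the root name followed by its rendered descendants."""
    return "\n".join([root] + render(root))


# Steps 2 and 3: two children, then a grandchild under staging.
create("staging", "project-phoenix")
create("testing", "project-phoenix")
create("feature-login-v2", "staging")
print(tree("project-phoenix"))
```

Running it prints project-phoenix at the top, staging and testing beneath it, and feature-login-v2 indented under staging.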

Step 4: Witness the magic of inheritance

Let’s prove that this isn’t all smoke and mirrors. We’ll create a Role in the parent namespace that allows viewing Pods.

# viewer-role.yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: pod-viewer
  namespace: project-phoenix
rules:
- apiGroups: [""]
  resources: ["pods"]
  verbs: ["get", "list", "watch"]

Apply it:

kubectl apply -f viewer-role.yaml

Now, let’s give a user (we’ll call her jane.doe) that role in the parent namespace.

kubectl create rolebinding jane-viewer --role=pod-viewer --user=jane.doe -n project-phoenix

Here’s the kicker. Even though we only granted Jane permission in project-phoenix, she can now view pods in the staging, testing, and feature-login-v2 namespaces as well.

# Run as an admin: --as impersonates Jane (this needs impersonation rights)
kubectl auth can-i get pods -n staging --as=jane.doe
# yes

# And even in the grandchild namespace!
kubectl auth can-i get pods -n feature-login-v2 --as=jane.doe
# yes

No copy-pasting required. HNC saw the Role and RoleBinding in the parent and automatically propagated copies of both down the entire tree. That’s the power of parenting.
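To see why Jane's single binding reaches the grandchild, here is a toy Python model of the check. It is a simplification, not HNC's or the Kubernetes API server's actual logic. Because HNC copies the parent's Role and RoleBinding into every descendant, a namespace effectively holds its own RBAC objects plus those of all its ancestors, so the model simply walks up the parent chain. The other-project namespace is hypothetical, added to show that an unrelated branch is unaffected.

```python
# Toy model of RBAC inheritance through a namespace tree.
# Simplification: real HNC creates actual copies; here we just look upward.
PARENT = {
    "project-phoenix": None,
    "staging": "project-phoenix",
    "testing": "project-phoenix",
    "feature-login-v2": "staging",
    "other-project": None,  # hypothetical unrelated root
}

# Roles created directly in a namespace: role name -> allowed (verb, resource).
roles = {
    "project-phoenix": {
        "pod-viewer": {("get", "pods"), ("list", "pods"), ("watch", "pods")},
    },
}

# RoleBindings created directly in a namespace: (binding, role, user).
bindings = {
    "project-phoenix": [("jane-viewer", "pod-viewer", "jane.doe")],
}


def chain(ns):
    """ns followed by its parent, grandparent, ... up to the root."""
    result = []
    while ns is not None:
        result.append(ns)
        ns = PARENT[ns]
    return result


def can_i(verb, resource, ns, user):
    """Rough analogue of `kubectl auth can-i VERB RESOURCE -n NS --as=USER`."""
    # Roles visible in ns: its own plus everything inherited from ancestors.
    # If names clash, the nearest namespace wins here (a sketch choice only).
    visible_roles = {}
    for source in chain(ns):
        for name, rules in roles.get(source, {}).items():
            visible_roles.setdefault(name, rules)
    for source in chain(ns):
        for _binding, role, subject in bindings.get(source, []):
            if subject == user and (verb, resource) in visible_roles.get(role, set()):
                return True
    return False


print(can_i("get", "pods", "feature-login-v2", "jane.doe"))  # inherited grant
print(can_i("delete", "pods", "staging", "jane.doe"))        # verb not in role
print(can_i("get", "pods", "other-project", "jane.doe"))     # different tree
```

The three calls print True, False, and False: the grant flows down the project-phoenix branch, stays limited to the verbs in pod-viewer, and never leaks sideways into another tree.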

A word of caution from a fellow parent

As with real parenting, this new power comes with its own set of challenges. It’s not a silver bullet, and you should be aware of a few things before you go building a ten-level-deep namespace dynasty.

- Complexity can creep in: A deep, sprawling tree of namespaces can become its own kind of nightmare to debug. Who has access to what? Which quota is affecting this pod? Keep your hierarchy logical and as flat as you can get away with. Just because you can create a great-great-great-grandchild namespace doesn’t mean you should.

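- Performance is not free: HNC is generally efficient, but propagation works by creating a real copy of each object in every descendant namespace, so propagating policies across thousands of namespaces multiplies the objects the controller must create and keep in sync. For most clusters, the overhead is negligible. For mega-clusters, it’s something to monitor.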

- Not everyone obeys the parents: HNC propagates RBAC objects out of the box, other core resources such as NetworkPolicies can be opted in through its configuration, and hierarchical quotas are available as an optional feature. But not all third-party tools or custom controllers are hierarchy-aware. They might only see the flat world, so always test your specific tools.
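Both the complexity and the performance caveats can be put into numbers before they bite. This toy Python audit (not an HNC feature; login-spike and login-spike-retry are hypothetical namespaces) reports each namespace's depth, flags anything deeper than a limit you choose, and estimates how many propagated copies a set of objects would produce, since each object placed in a namespace is copied into every one of its descendants. The MAX_DEPTH value of 3 is an arbitrary house rule for illustration, not an HNC setting.

```python
# Toy audit of a namespace hierarchy: depth and propagation fan-out.
# Namespace names beyond the walkthrough are hypothetical.
PARENT = {
    "project-phoenix": None,
    "staging": "project-phoenix",
    "testing": "project-phoenix",
    "feature-login-v2": "staging",
    "login-spike": "feature-login-v2",
    "login-spike-retry": "login-spike",
}

MAX_DEPTH = 3  # arbitrary house rule for this example, not an HNC setting


def chain(ns):
    """ns followed by all of its ancestors, nearest first."""
    result = []
    while ns is not None:
        result.append(ns)
        ns = PARENT[ns]
    return result


def depth(ns):
    """0 for a root, 1 for its children, and so on."""
    return len(chain(ns)) - 1


def descendants(ns):
    """Every namespace that has ns somewhere above it."""
    return [n for n in PARENT if n != ns and ns in chain(n)]


def propagated_copies(objects_per_ns):
    """Each object placed in a namespace is copied into every descendant."""
    return sum(count * len(descendants(ns)) for ns, count in objects_per_ns.items())


too_deep = [ns for ns in PARENT if depth(ns) > MAX_DEPTH]
print("too deep:", too_deep)
# Two objects (a Role and a RoleBinding) in the root fan out to five descendants.
print("copies:", propagated_copies({"project-phoenix": 2}))
```

Here login-spike-retry sits four levels down and gets flagged, and a single Role plus RoleBinding at the root already turns into ten propagated copies. Multiply that by hundreds of teams and the "something to monitor" advice starts to make sense.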

Go forth and organize

Hierarchical Namespaces are the organizational equivalent of finally buying drawer dividers for that one kitchen drawer. You know the one: the whisk is tangled with the batteries and a single, mysterious key. They transform your cluster from a chaotic free-for-all into a structured, manageable hierarchy that actually reflects how your organization works. It’s about letting you set rules with confidence and delegate with ease.

So go ahead, embrace your inner cluster parent. Bring some order to the digital chaos. Your future self, the one who isn’t spending a Friday night debugging a rogue pod in the wrong environment, will thank you. Just don’t be surprised when your newly organized child namespaces start acting like teenagers, asking for the production Wi-Fi password or, heaven forbid, the keys to the cluster-admin car.

After all, with great power comes great responsibility… and a much, much cleaner kubectl get ns output.