The internet has many glamorous job titles. Cloud architect. Platform engineer. Security specialist. Site reliability engineer, which sounds like someone hired to keep civilization from sliding gently into a ditch.

DNS has none of that glamour.

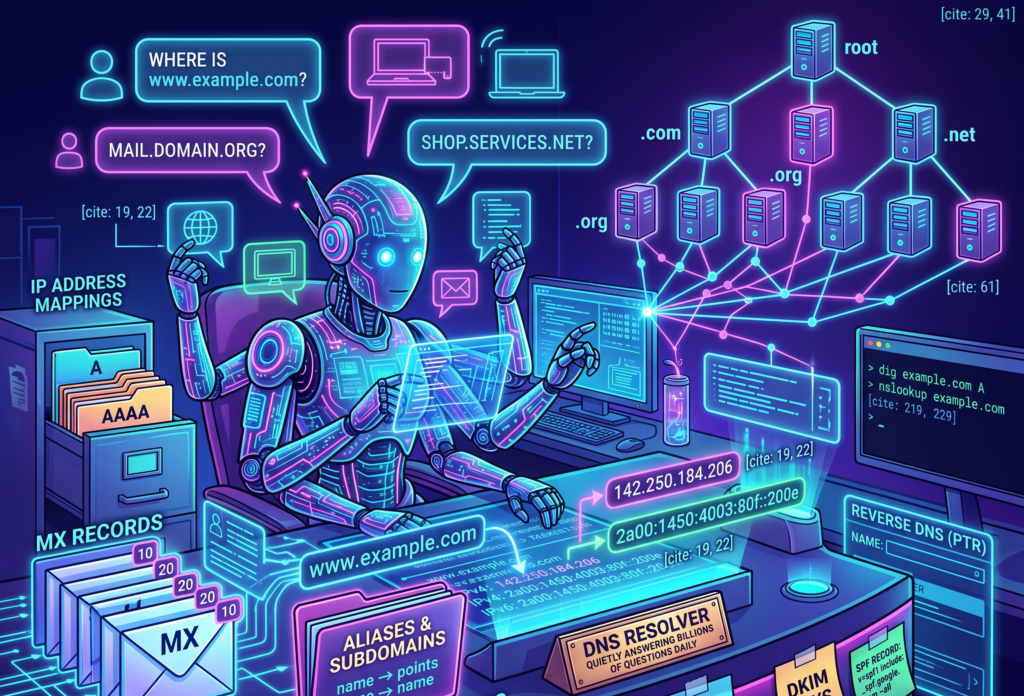

DNS is the receptionist sitting at the front desk of the internet, quietly answering the same question billions of times a day.

Where is this thing?

You type a name like “www.example.com”. Your browser nods with confidence, like a waiter who has written nothing down, and somehow a website appears. Behind that small miracle is DNS, the Domain Name System, a distributed naming system that turns human-friendly names into machine-friendly addresses.

Humans like names. Computers prefer numbers. This is one of the many reasons computers are not invited to dinner parties.

Without DNS, using the internet would feel like trying to visit every shop in town by memorizing its tax identification number. Possible, perhaps, but only for people who alphabetize their spice rack and have strong opinions about subnet masks.

DNS lets us type names instead of IP addresses. It maps domain names to the information needed to reach services, send email, verify ownership, issue certificates, and keep many small pieces of infrastructure from wandering into traffic.

It is boring in the way plumbing is boring. Nobody praises it when it works. Everybody becomes a philosopher when it breaks.

Why DNS exists

When you visit a website, your browser needs to know where that website lives. The name “google.com” is useful to you, but it is not directly useful to the machines moving packets across networks.

Those machines need IP addresses.

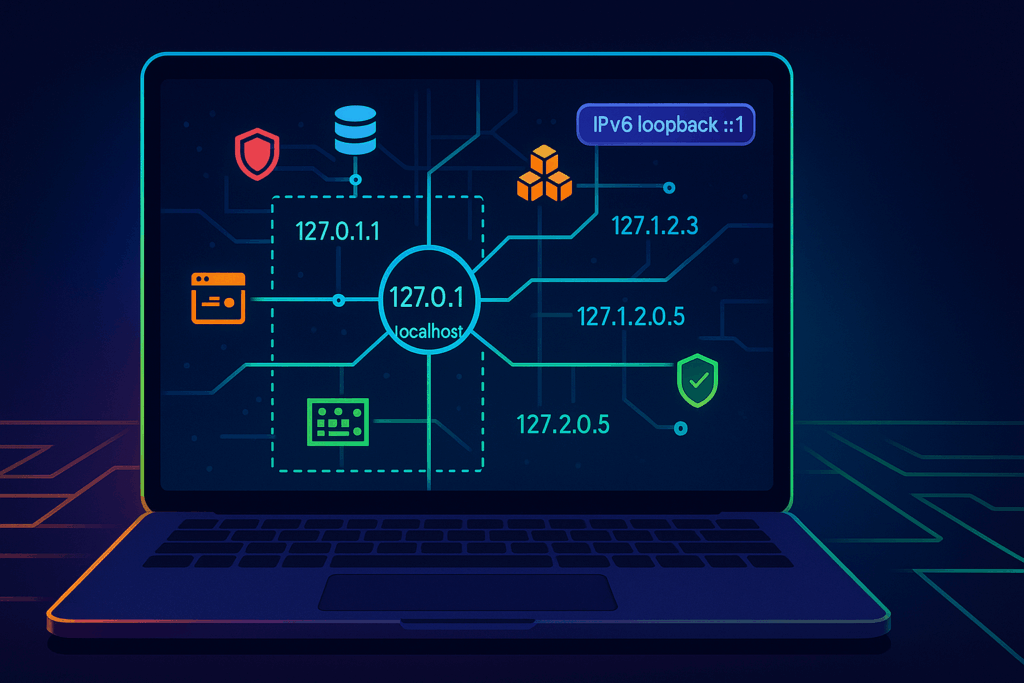

An IPv4 address looks like this.

    142.250.184.206

An IPv6 address looks like this.

    2a00:1450:4003:80f::200e

IPv6 addresses are what happens when a numbering system grows up, gets a mortgage, and decides readability is no longer its problem.

The basic job of DNS is to answer questions such as this.

What IP address should I use for www.example.com?

And then DNS replies with an answer such as this.

    www.example.com -> 93.184.216.34

That is the simple version. It is true enough to be useful, but not complete enough to explain why DNS can ruin your afternoon while wearing the innocent expression of a houseplant.

The more accurate version is that DNS is a distributed, hierarchical, cached database. No single server knows everything. Instead, different parts of the DNS system know different parts of the answer, and resolvers know how to ask the right questions in the right order.

The internet’s receptionist does not keep every phone number in one drawer. That would be madness, and also suspiciously like a spreadsheet someone named Martin promised to maintain in 2017.

What happens when you type a domain name

When you type a website address into your browser, your machine does not immediately interrogate the entire internet. It starts closer to home, because even computers understand that walking across the office to ask a question is embarrassing if the answer was already on your desk.

A simplified DNS lookup usually works like this.

- The browser checks whether it already knows the answer.

- The operating system checks its own DNS cache.

- The request may go to your router, corporate DNS, ISP resolver, or a public resolver such as Google DNS or Cloudflare DNS.

- If the resolver does not already have the answer cached, it starts asking the DNS hierarchy.

- It asks the root DNS servers where to find the servers for the top-level domain, such as .com.

- It asks the .com servers where to find the authoritative nameservers for the domain.

- It asks the authoritative nameserver for the actual record.

- The IP address comes back.

- Your browser connects to the server.

- The website loads, assuming the rest of the internet has decided to behave.

This process often happens in milliseconds. It is quick enough to look like magic and structured enough to be bureaucracy.

That distinction matters.

Magic cannot be debugged. Bureaucracy can, provided you know which desk lost the form.
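To make the desks concrete, here is a toy model of the referral chain from the list above. It is a sketch under loud assumptions: the server names, the zone contents, and the `resolve` and `in_zone` helpers are hypothetical, everything lives in one dictionary, and no packets leave the machine. Real resolvers speak the DNS protocol over port 53, cache every referral, and handle many more cases.

```python
# Toy model of the referral chain a recursive resolver follows.
# Server names, zone data, and the helper functions are hypothetical.

# For each server: zone it knows about -> ("referral", next server)
# or ("answer", address) if it is authoritative for that name.
TOY_SERVERS = {
    "root": {"com.": ("referral", "com-tld")},
    "com-tld": {"example.com.": ("referral", "ns1.provider.com")},
    "ns1.provider.com": {"www.example.com.": ("answer", "93.184.216.34")},
}


def in_zone(name: str, zone: str) -> bool:
    """True if name equals zone or sits below it (label-aware suffix match)."""
    return name == zone or name.endswith("." + zone)


def resolve(name: str) -> tuple[str, list[str]]:
    """Start at the root and follow referrals until a server answers.

    Returns the answer and the list of servers asked, in order.
    """
    if not name.endswith("."):
        name += "."  # use the fully qualified form, e.g. "www.example.com."
    server, asked = "root", []
    while True:
        asked.append(server)
        known = [zone for zone in TOY_SERVERS[server] if in_zone(name, zone)]
        if not known:
            raise LookupError(f"{server} knows nothing about {name}")
        # Use the most specific zone this server has information for.
        kind, value = TOY_SERVERS[server][max(known, key=len)]
        if kind == "answer":
            return value, asked
        server = value  # referral: ask the next server down the hierarchy


answer, path = resolve("www.example.com")
print(answer)             # 93.184.216.34
print(" -> ".join(path))  # root -> com-tld -> ns1.provider.com
```

Each referral narrows the question: the root only knows who handles .com, the .com servers only know who handles example.com, and only the authoritative server holds the actual record.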

Recursive resolvers and authoritative nameservers

Two DNS roles are worth understanding early, because they explain a lot of real-world behavior.

The first is the recursive resolver.

This is the DNS server your device asks for help. It does the legwork. Your laptop says, “Where is www.example.com?” and the recursive resolver goes off to find the answer. It may already know the answer from cache, or it may need to ask other DNS servers.

The recursive resolver is the intern sent across the building with a clipboard and mild panic.

The second is the authoritative nameserver.

This is the DNS server that holds the official answer for a domain or zone. If a domain uses a particular DNS provider, such as Route 53, Cloud DNS, Cloudflare, or another provider, that provider’s authoritative nameservers are responsible for answering questions about the records configured there.

The authoritative nameserver is the person with the spreadsheet, the badge, and the unsettling confidence.

This difference matters because your laptop usually does not ask the authoritative nameserver directly. It asks a resolver. The resolver may answer from cache. That is why one person sees the new DNS record and another person, in the same meeting, sees the old one and begins quietly questioning reality.

DNS records are tiny instructions with large consequences

A DNS record is a piece of information stored in a DNS zone. It tells DNS what should happen when someone asks about a name.

A domain without DNS records is like an office building with no signs, no mailbox, no receptionist, and one confused courier holding your production traffic.

DNS records decide things like these.

- Which IP address serves a website

- Which hostname acts as an alias

- Which servers receive email

- Which systems are allowed to send email for a domain

- Which certificate authorities may issue TLS certificates

- Which nameservers are responsible for the domain

- Which services exist under specific names

If DNS records are wrong, the result is rarely poetic. Websites stop loading. Email disappears into procedural fog. Certificates fail. Monitoring dashboards develop a sudden interest in the color red.

DNS records look small, but they carry adult responsibility.

A and AAAA records

The A record is the most basic DNS record. It maps a name to an IPv4 address.

    example.com -> 192.0.2.10

This record says, with refreshing directness, “This name lives at this IPv4 address.”

The AAAA record does the same job for IPv6.

    example.com -> 2001:db8:1234::10

A and AAAA records are common when you control the target IP address. For example, you may point a domain to a virtual machine, a static endpoint, or a load balancer with stable addresses.
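If you generate records from a list of addresses, the record type follows from the address family. A minimal sketch using Python's standard `ipaddress` module; `record_type_for` is a hypothetical helper name, and the addresses are the documentation examples above.

```python
import ipaddress


def record_type_for(address: str) -> str:
    """Return "A" for an IPv4 address and "AAAA" for an IPv6 address."""
    ip = ipaddress.ip_address(address)  # raises ValueError if not an IP
    return "A" if ip.version == 4 else "AAAA"


for addr in ["192.0.2.10", "2001:db8:1234::10"]:
    print(f"example.com {record_type_for(addr)} {addr}")
# example.com A 192.0.2.10
# example.com AAAA 2001:db8:1234::10
```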

In modern cloud environments, however, you often do not want to point directly to a single server. You may want to point to a load balancer, a CDN, or a managed service whose underlying IPs can change. That is where aliases and provider-specific features become important.

DNS is simple until cloud infrastructure arrives wearing three badges and carrying a YAML file.

CNAME records

A CNAME record creates an alias from one DNS name to another DNS name.

    blog.example.com -> example-blog.provider.com

This does not work like an HTTP redirect. That distinction is important.

A browser redirect says, “Go to a different URL.”

A CNAME says, “This DNS name is really another DNS name. Ask about that one instead.”

It is not a forwarding service. It is an alias.
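The difference from a redirect shows up in how a resolver handles the alias: it keeps asking about new names until it reaches an address, and the browser never learns that anything changed. A toy sketch, assuming hypothetical zone data (the `RECORDS` table, the CDN hostname, and the 198.51.100.7 address are invented for illustration) and only CNAME and A records; `follow_cname` is also a hypothetical helper.

```python
# Hypothetical zone data: name -> (record type, value).
RECORDS = {
    "blog.example.com": ("CNAME", "example-blog.provider.com"),
    "example-blog.provider.com": ("CNAME", "edge.provider-cdn.net"),
    "edge.provider-cdn.net": ("A", "198.51.100.7"),
}


def follow_cname(name: str, max_hops: int = 8) -> tuple[str, list[str]]:
    """Chase CNAME aliases until an A record is reached.

    Returns the address and every name visited. The hop limit stops
    alias loops from running forever.
    """
    chain = [name]
    for _ in range(max_hops):
        rtype, value = RECORDS[name]
        if rtype == "A":
            return value, chain
        name = value  # "this name is really that name; ask about that one"
        chain.append(name)
    raise RuntimeError("too many CNAME hops: " + " -> ".join(chain))


address, chain = follow_cname("blog.example.com")
print(address)  # 198.51.100.7
print(chain)    # ['blog.example.com', 'example-blog.provider.com', 'edge.provider-cdn.net']
```

The browser's address bar still says blog.example.com throughout; only the DNS lookup followed the aliases.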

CNAME records are especially useful for subdomains. For example, you may point docs.example.com to a documentation platform, or shop.example.com to an e-commerce provider.

One important rule is that a CNAME normally cannot coexist with other records at the same name. If blog.example.com is a CNAME, it should not also have MX or TXT records at that exact same name. DNS dislikes identity crises.

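Also, the root of a domain such as example.com, often called the zone apex, cannot be a standard CNAME, because the apex must carry NS and SOA records and a CNAME cannot share its name with other records. Many DNS providers work around this with provider-specific record types, often called ALIAS or ANAME, or with other alias features that look up the target behind the scenes and answer with ordinary A or AAAA records.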
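Both CNAME rules are easy to check mechanically. A simplified lint sketch over a hypothetical list of (name, type) pairs; `cname_problems` is an invented helper, and it ignores the DNSSEC record types that the standards do allow next to a CNAME.

```python
from collections import defaultdict


def cname_problems(zone_apex: str, records: list[tuple[str, str]]) -> list[str]:
    """Report CNAMEs at the apex or sharing a name with other record types."""
    types_by_name = defaultdict(set)
    for name, rtype in records:
        types_by_name[name].add(rtype)

    problems = []
    for name, types in sorted(types_by_name.items()):
        if "CNAME" not in types:
            continue
        if name == zone_apex:
            problems.append(f"{name}: CNAME at the zone apex")
        others = sorted(types - {"CNAME"})
        if others:
            problems.append(f"{name}: CNAME alongside {', '.join(others)}")
    return problems


zone = [
    ("example.com", "SOA"),
    ("example.com", "NS"),
    ("example.com", "CNAME"),
    ("blog.example.com", "CNAME"),
    ("blog.example.com", "TXT"),
    ("docs.example.com", "CNAME"),
]
for problem in cname_problems("example.com", zone):
    print(problem)
# blog.example.com: CNAME alongside TXT
# example.com: CNAME at the zone apex
# example.com: CNAME alongside NS, SOA
```

docs.example.com passes: a lone CNAME on a subdomain is exactly the allowed case.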

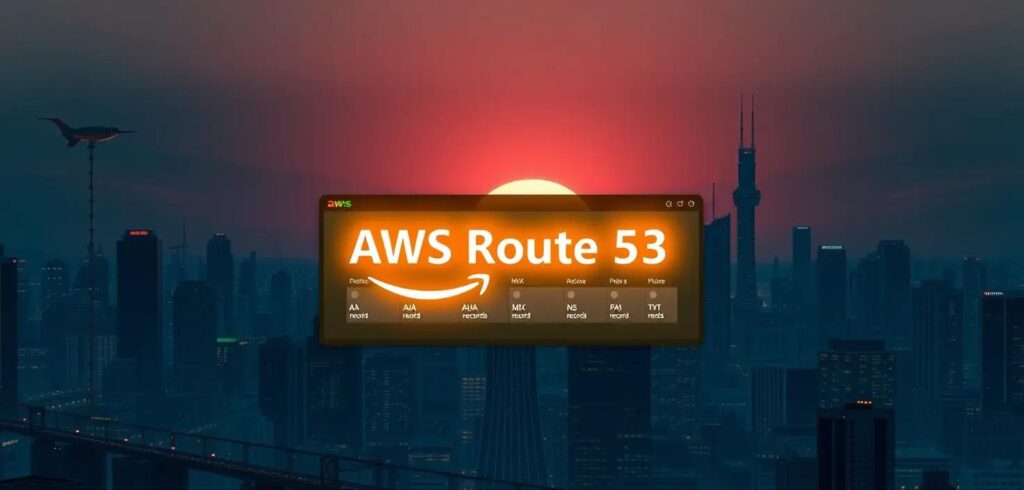

For example, AWS Route 53 has Alias records, which are not a normal DNS record type but are extremely useful when pointing a root domain to an AWS load balancer, CloudFront distribution, or another AWS target.

The practical lesson is simple. Use CNAMEs for aliases when allowed. Use your DNS provider’s supported alias mechanism when dealing with root domains and cloud-managed targets.

This is DNS saying, “There are rules, but we have invented paperwork to survive them.”

MX records

MX records tell the world where email for a domain should be delivered.

    example.com -> mail.example.com

In real DNS, MX records also have priorities. Lower numbers are preferred.

    example.com MX 10 mail1.example.com
    example.com MX 20 mail2.example.com

This means mail servers should try mail1.example.com first, and use mail2.example.com as a fallback.
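Picking a delivery order from MX records is a sort on the preference number. A minimal sketch; `delivery_order` is a hypothetical helper, and real mail servers (per the SMTP specification) also randomize among hosts with equal preference, which this version does not.

```python
# MX records as (preference, host) pairs, matching the example above.
mx_records = [(20, "mail2.example.com"), (10, "mail1.example.com")]


def delivery_order(records: list[tuple[int, str]]) -> list[str]:
    """Return mail hosts in the order a sender should try them.

    Lower preference values come first. Ties keep their input order
    because sorted() is stable.
    """
    return [host for _, host in sorted(records, key=lambda r: r[0])]


print(delivery_order(mx_records))  # ['mail1.example.com', 'mail2.example.com']
```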

MX records matter because email is not delivered to your website. It is delivered to mail servers responsible for your domain. This is why a website can work perfectly while email is broken, and everyone involved can be technically correct while still being deeply unhappy.

Email uses DNS heavily. MX records route the mail. TXT records help prove which systems are allowed to send it. PTR records may help receiving systems trust the sending server. Email security is basically DNS wearing a trench coat full of paperwork.

TXT records

TXT records store text. That sounds harmless, like a sticky note, until you realize that half the modern internet uses sticky notes to prove ownership, configure email security, and convince platforms that you are not a spam goblin.

A common SPF record looks like this.

    example.com TXT "v=spf1 include:_spf.google.com ~all"

SPF helps define which systems are allowed to send email for a domain.
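An SPF record is a list of mechanisms, each with an optional qualifier: `+` pass (the default), `-` fail, `~` softfail, `?` neutral. So `~all` means "anything not matched earlier is a soft fail." A simplified parsing sketch; `parse_spf` is hypothetical, and it does not follow `include:` lookups, treat modifiers such as `redirect=` specially, or evaluate anything against a sending IP.

```python
def parse_spf(record: str) -> list[tuple[str, str]]:
    """Split an SPF TXT value into (qualifier, mechanism) pairs (simplified)."""
    terms = record.split()
    if not terms or terms[0] != "v=spf1":
        raise ValueError("not an SPF record")
    parsed = []
    for term in terms[1:]:
        # A leading +, -, ~ or ? is the qualifier; "+" (pass) is the default.
        if term[0] in "+-~?":
            parsed.append((term[0], term[1:]))
        else:
            parsed.append(("+", term))
    return parsed


print(parse_spf("v=spf1 include:_spf.google.com ~all"))
# [('+', 'include:_spf.google.com'), ('~', 'all')]
```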

DKIM also uses DNS, usually through TXT records, to publish public keys that receiving mail systems use to verify email signatures.

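DMARC uses DNS to publish a policy telling receivers what to do with mail that fails DMARC, which happens when neither SPF nor DKIM passes for a domain aligned with the visible From address.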

A simplified DMARC record may look like this.

    _dmarc.example.com TXT "v=DMARC1; p=quarantine; rua=mailto:dmarc@example.com"

TXT records are also used for domain verification. Google, Microsoft, GitHub, certificate providers, and many SaaS platforms may ask you to create a TXT record to prove that you control a domain.
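A DMARC record itself is a semicolon-separated list of tag=value pairs. In the simplified record above, `p` is the policy (`none`, `quarantine`, or `reject`) and `rua` is where aggregate reports go. A minimal parsing sketch with a hypothetical `parse_dmarc` helper; it skips the validation a real receiver performs.

```python
def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT value into its tag=value pairs (simplified)."""
    tags = {}
    for part in record.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate a trailing semicolon
        key, _, value = part.partition("=")
        tags[key.strip()] = value.strip()
    if tags.get("v") != "DMARC1":
        raise ValueError("not a DMARC record")
    return tags


policy = parse_dmarc("v=DMARC1; p=quarantine; rua=mailto:dmarc@example.com")
print(policy["p"])    # quarantine
print(policy["rua"])  # mailto:dmarc@example.com
```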

The humble TXT record is DNS with a clipboard and a suspicious number of compliance responsibilities.

NS and SOA records

NS records define which nameservers are authoritative for a domain or zone.

    example.com NS ns1.provider.com
    example.com NS ns2.provider.com

Without correct NS records, resolvers may not know where to ask for official answers. That is a problem, because DNS without authority is just gossip with port 53.

SOA stands for Start of Authority. Every DNS zone has an SOA record. It contains administrative information about the zone, including the primary nameserver, contact details, serial number, and timing values used by secondary nameservers.

You usually do not edit SOA records during basic DNS work, but they exist behind the scenes. They are the domain’s administrative birth certificate, stored in a filing cabinet that occasionally matters a lot.
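To see what is inside that filing cabinet, here is a sketch that splits an SOA record into its seven fields, in zone-file order. The example values are hypothetical, and the date-style serial (YYYYMMDDnn) is a common convention rather than a requirement. `SOA`, `parse_soa`, and `contact_email` are invented names; the contact field encodes an email address with the first dot standing in for `@`.

```python
from typing import NamedTuple


class SOA(NamedTuple):
    primary_ns: str  # MNAME: the primary nameserver for the zone
    contact: str     # RNAME: a mailbox, with the first "." meaning "@"
    serial: int      # bumped on every change so secondaries notice
    refresh: int     # seconds between secondary checks of the serial
    retry: int       # seconds to wait after a failed refresh
    expire: int      # seconds a secondary keeps answering without a refresh
    minimum: int     # used in computing the TTL for negative answers


def parse_soa(rdata: str) -> SOA:
    """Split SOA record data in zone-file order into named fields."""
    mname, rname, *numbers = rdata.split()
    return SOA(mname, rname, *(int(n) for n in numbers))


def contact_email(rname: str) -> str:
    """Turn "hostmaster.example.com." into "hostmaster@example.com".

    Simplified: ignores escaped dots ("\\.") inside the local part.
    """
    local, _, domain = rname.rstrip(".").partition(".")
    return f"{local}@{domain}"


soa = parse_soa("ns1.provider.com. hostmaster.example.com. 2024010101 7200 3600 1209600 3600")
print(soa.serial)                  # 2024010101
print(contact_email(soa.contact))  # hostmaster@example.com
```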

PTR records and reverse DNS

Most DNS lookups turn names into IP addresses. A PTR record does the reverse. It maps an IP address back to a name.

    192.0.2.10 -> server.example.com

This is called reverse DNS.

Reverse DNS is often used in email systems, logging, security investigations, and operational troubleshooting. If a mail server sends email from an IP address, receiving systems may check whether reverse DNS makes sense. If it does not, the email may look suspicious.
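Reverse lookups use special names: for IPv4, the octets are reversed and placed under `in-addr.arpa`, and Python's `ipaddress` module can build that name through `reverse_pointer`. The sketch below also shows a check often called forward-confirmed reverse DNS: the PTR name must resolve back to the same address. The `PTR` and `A` tables and the `forward_confirmed` helper are hypothetical stand-ins for real lookups.

```python
import ipaddress

# Hypothetical DNS data standing in for real PTR and A lookups.
PTR = {"10.2.0.192.in-addr.arpa": "server.example.com"}
A = {"server.example.com": ["192.0.2.10"]}


def forward_confirmed(ip: str) -> bool:
    """True if ip -> PTR name -> A records leads back to the same ip."""
    addr = ipaddress.ip_address(ip)
    name = PTR.get(addr.reverse_pointer)  # e.g. "10.2.0.192.in-addr.arpa"
    if name is None:
        return False  # no reverse DNS at all
    return addr in {ipaddress.ip_address(a) for a in A.get(name, [])}


print(ipaddress.ip_address("192.0.2.10").reverse_pointer)  # 10.2.0.192.in-addr.arpa
print(forward_confirmed("192.0.2.10"))  # True
print(forward_confirmed("192.0.2.99"))  # False
```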

PTR records are usually managed by whoever controls the IP address range, often a cloud provider, hosting provider, or network team. This is why you may control example.com but still need to configure reverse DNS somewhere else.

DNS enjoys reminding us that ownership is a layered concept, like lasagna or enterprise access management.

SRV and CAA records

SRV records describe where specific services are available. They are often used by systems such as VoIP, chat, directory services, or service discovery mechanisms.

An SRV record can include the service name, protocol, priority, weight, port, and target host.

    _service._tcp.example.com -> target.example.com on port 443

Many people can use DNS for years without touching SRV records. Then one day a system requires them, and SRV appears like a cousin nobody mentioned during onboarding.
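When several SRV records exist, clients try the lowest priority first and spread load across records of equal priority according to their weight. A simplified sketch of that selection; `SRV` and `pick_srv` are hypothetical names, the records are invented, and unlike the SRV specification (RFC 2782) this version never picks a zero-weight record when others in the group have weight.

```python
import random
from typing import NamedTuple


class SRV(NamedTuple):
    priority: int
    weight: int
    port: int
    target: str


def pick_srv(records: list[SRV], rng: random.Random) -> SRV:
    """Lowest priority wins; weight spreads load within that priority."""
    best = min(r.priority for r in records)
    candidates = [r for r in records if r.priority == best]
    if sum(r.weight for r in candidates) == 0:
        return rng.choice(candidates)  # all weights zero: pick uniformly
    return rng.choices(candidates, weights=[r.weight for r in candidates])[0]


records = [
    SRV(10, 60, 443, "a.example.com"),
    SRV(10, 40, 443, "b.example.com"),
    SRV(20, 0, 443, "backup.example.com"),
]
rng = random.Random(42)  # seeded so runs are repeatable
picks = [pick_srv(records, rng).target for _ in range(1000)]
print(picks.count("a.example.com"), picks.count("b.example.com"))
```

With weights 60 and 40, the counts land roughly in a 60/40 split, and backup.example.com is never chosen while the priority 10 targets exist.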

CAA records control which certificate authorities are allowed to issue TLS certificates for your domain.

    example.com CAA 0 issue "letsencrypt.org"

This tells certificate authorities that Let’s Encrypt is allowed to issue certificates for the domain. Other certificate authorities must not.

CAA is a useful security control. It is not a magic shield, but it reduces the risk of unauthorized certificate issuance. Think of it as a small velvet rope in front of your TLS certificates. Not glamorous, but better than letting the entire street into the building.
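The basic CAA rule for a regular (non-wildcard) certificate fits in a few lines: if there are no `issue` records, any CA may issue; otherwise the CA must be named. A simplified sketch with a hypothetical `ca_may_issue` helper; it ignores `issuewild`, the critical flag, and the climb to parent domains that real CAs perform.

```python
def ca_may_issue(caa_records: list[tuple[int, str, str]], ca_domain: str) -> bool:
    """Simplified CAA check for a non-wildcard certificate.

    caa_records holds (flags, tag, value) tuples found for the domain.
    """
    # Keep only the CA domain from each "issue" value, dropping any
    # ";"-separated parameters. An empty value (issue ";") names no CA.
    issuers = [
        value.split(";")[0].strip()
        for _, tag, value in caa_records
        if tag == "issue"
    ]
    if not issuers:
        return True  # no issue records: CAA does not restrict issuance
    return ca_domain in issuers


records = [(0, "issue", "letsencrypt.org")]
print(ca_may_issue(records, "letsencrypt.org"))   # True
print(ca_may_issue(records, "other-ca.example"))  # False
print(ca_may_issue([], "other-ca.example"))       # True
```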

TTL and the myth of DNS propagation

TTL means Time To Live. It tells DNS resolvers how long they may cache a DNS answer.

If a record has a TTL of 3600 seconds, a resolver can cache that answer for one hour.

This is where many DNS misunderstandings are born, raised, and eventually promoted into incident reports.

People often say, “DNS propagation takes time.” The phrase is common, but it can be misleading. DNS changes are not usually pushed across the internet like flyers under apartment doors. Most of the time, you are waiting for cached answers to expire.

If a resolver cached the old IP address five minutes before you changed the record, and the TTL was one hour, that resolver may continue returning the old answer until the cache expires.
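That scenario can be replayed with a toy cache. This sketch assumes a hypothetical `CachingResolver` class with time passed in as plain seconds; a dictionary stands in for the authoritative nameserver, and the new address 198.51.100.20 is invented.

```python
class CachingResolver:
    """A toy resolver cache that honors each answer's TTL.

    Time is passed in explicitly (seconds) so the behavior is easy to follow.
    """

    def __init__(self, upstream: dict[str, tuple[str, int]]):
        self.upstream = upstream  # name -> (address, ttl), the "authoritative" data
        self.cache: dict[str, tuple[str, float]] = {}  # name -> (address, expires_at)

    def lookup(self, name: str, now: float) -> str:
        cached = self.cache.get(name)
        if cached and now < cached[1]:
            return cached[0]  # still fresh: answer from cache, upstream not asked
        address, ttl = self.upstream[name]
        self.cache[name] = (address, now + ttl)
        return address


authoritative = {"www.example.com": ("192.0.2.10", 3600)}
resolver = CachingResolver(authoritative)

print(resolver.lookup("www.example.com", now=0))     # 192.0.2.10, cached for one hour
authoritative["www.example.com"] = ("198.51.100.20", 3600)  # record changed at t=300
print(resolver.lookup("www.example.com", now=600))   # 192.0.2.10 (old answer, still cached)
print(resolver.lookup("www.example.com", now=3600))  # 198.51.100.20 (cache expired)
```

Nothing was "propagating" between t=300 and t=3600. The new record was live the whole time; the resolver simply had no reason to ask again.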

A low TTL can make changes appear faster, but it can also increase DNS query volume. A high TTL reduces query volume, but it makes mistakes more persistent.

This is the technical equivalent of writing something in permanent marker because it felt efficient at the time.

Before planned DNS changes, teams often lower TTL values in advance. For example, if a record currently has a TTL of 86400 seconds, which is 24 hours, you might reduce it to 300 seconds a day before migration. Then, when you switch the record, cached answers expire much faster.

After the migration is stable, you may increase the TTL again.

This is not exciting work. It is careful work. DNS rewards careful people by giving them fewer reasons to age visibly during production changes.

Common ways DNS breaks things

DNS failures are rarely introduced with dramatic music. They usually arrive disguised as simple user complaints.

“The website is down.”

“Email is not arriving.”

“It works from my machine.”

“The old environment is still receiving traffic.”

These are not always DNS problems, but DNS should be part of the investigation.

Common issues include these.

- An A record points to the wrong IP address.

- A CNAME points to the wrong target.

- A record was changed, but resolvers still have the old answer cached.

- Nameservers at the registrar do not match the DNS provider where records were edited.

- MX records are missing or misconfigured.

- TXT records for SPF, DKIM, or DMARC are incomplete.

- A certificate authority cannot issue a certificate because CAA records block it.

- Internal and external DNS return different answers, and nobody documented the difference because optimism is cheaper than documentation.

A particularly common mistake is editing DNS records in the wrong place. The domain may be registered with one company, but the authoritative DNS may be hosted somewhere else. Changing records at the registrar will do nothing if the authoritative nameservers point to another DNS provider.

This is how people end up pressing Save repeatedly in a web console while DNS stares politely from another building.
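Before editing anything, it helps to compare the nameservers the domain is actually delegated to (visible, for example, in the referral from the TLD servers in `dig example.com +trace`) with the nameservers of the provider where you plan to edit. A minimal comparison sketch; `delegation_mismatch` and the nameserver names are hypothetical.

```python
def delegation_mismatch(registrar_ns: set[str], provider_ns: set[str]) -> set[str]:
    """Nameservers listed on only one side; an empty set means they agree."""

    def normalize(names: set[str]) -> set[str]:
        # DNS names are case-insensitive; drop the trailing dot for comparison.
        return {n.lower().rstrip(".") for n in names}

    return normalize(registrar_ns) ^ normalize(provider_ns)


# Hypothetical example: the domain still delegates to an old host.
at_registrar = {"ns1.old-host.example", "ns2.old-host.example"}
at_provider = {"ns1.provider.com.", "ns2.provider.com."}
print(sorted(delegation_mismatch(at_registrar, at_provider)))
# ['ns1.old-host.example', 'ns1.provider.com', 'ns2.old-host.example', 'ns2.provider.com']
```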

DNS in cloud and DevOps

For cloud, DevOps, and platform engineering work, DNS is not optional background noise. It is where architecture becomes reachable.

A Kubernetes Ingress may expose an application through a cloud load balancer. DNS must point the application hostname to that load balancer.

A CDN such as CloudFront or Cloud CDN may sit in front of an application. DNS must point users toward the CDN, not directly to the origin.

A managed database, API gateway, object storage website, or SaaS platform may require CNAMEs, TXT verification records, private endpoints, or provider-specific aliases.

In AWS, Route 53 Alias records are commonly used to point domains to AWS resources such as Application Load Balancers or CloudFront distributions.

In GCP, Cloud DNS can host public or private zones, and DNS can be part of the design for internal services, private connectivity, and hybrid architectures.

In Kubernetes, internal DNS also matters. Services get names inside the cluster. Pods can call other services using names such as this.

    my-service.my-namespace.svc.cluster.local

That internal DNS is different from public DNS, but the idea is related. Names hide moving parts. Pods can die and be replaced with new IP addresses, and a Service that is deleted and recreated can come back with a different cluster IP. DNS gives workloads a stable name to use while the infrastructure performs its little disappearing act.
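Kubernetes Service names follow a predictable pattern, and pods usually get a DNS search list so that short names work. A sketch assuming the common defaults (cluster domain `cluster.local` and the standard pod search path); `service_fqdn` and `search_candidates` are hypothetical helpers, and the ndots rule is ignored.

```python
def service_fqdn(
    service: str, namespace: str, cluster_domain: str = "cluster.local"
) -> str:
    """Fully qualified in-cluster DNS name for a Kubernetes Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def search_candidates(
    name: str, pod_namespace: str, cluster_domain: str = "cluster.local"
) -> list[str]:
    """Names tried for a short lookup, using the usual pod search list.

    Simplified: ignores the ndots option and the final attempt with the
    name exactly as written.
    """
    search = [
        f"{pod_namespace}.svc.{cluster_domain}",
        f"svc.{cluster_domain}",
        cluster_domain,
    ]
    return [f"{name}.{suffix}" for suffix in search]


print(service_fqdn("my-service", "my-namespace"))
# my-service.my-namespace.svc.cluster.local

# From a pod in my-namespace, the short name works via the first search entry.
print(search_candidates("my-service", "my-namespace")[0])
# my-service.my-namespace.svc.cluster.local

# From another namespace, "service.namespace" works via the second entry.
print(search_candidates("my-service.my-namespace", "other")[1])
# my-service.my-namespace.svc.cluster.local
```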

Cloud architecture is full of things that move, scale, fail, restart, and get replaced. DNS is one of the systems that lets users pretend this is all very stable.

Bless DNS for its emotional labor.

Useful DNS troubleshooting commands

You do not need many tools to begin troubleshooting DNS. A few commands can reveal a lot.

Use dig to query DNS records.

    dig example.com A

Query a specific resolver.

    dig @8.8.8.8 example.com A

Check MX records.

    dig example.com MX

Check TXT records.

    dig example.com TXT

Trace the delegation path.

    dig example.com +trace

Use nslookup if it is what you have available.

    nslookup example.com

Use host for quick lookups.

    host example.com

For operational troubleshooting, compare answers from different resolvers. Your corporate DNS, Google DNS, Cloudflare DNS, and the authoritative nameserver may not all return the same answer at the same time, especially after a recent change.

That does not always mean DNS is broken. Sometimes it means DNS is being DNS, which is not comforting, but it is accurate.

When the receptionist leaves the desk

DNS is one of those technologies that feels simple until you need to explain why production traffic is still going to the old load balancer, why email authentication broke after a migration, or why half the office sees the new website, and the other half appears trapped in yesterday.

At its heart, DNS turns names into answers.

But in real systems, those answers are cached, delegated, aliased, verified, prioritized, and sometimes misfiled in a place nobody checked because the meeting was already running long.

If you work with Linux, cloud, Kubernetes, DevOps, security, networking, or web platforms, DNS is not optional. It is one of the quiet foundations underneath everything else.

It does not look dramatic on architecture diagrams. It does not usually get its own epic in Jira. It does not wear a cape. It sits at the desk, answers questions, points traffic in the right direction, and receives blame with the exhausted dignity of someone who has been doing everyone else’s routing work for decades.

DNS is the internet’s most underpaid receptionist.

And when that receptionist goes missing, nobody gets into the building.