Creating your first AWS account is a modern rite of passage.

It feels like you’ve just been handed the keys to a digital kingdom, a shiny, infinitely powerful box of LEGOs. You log into that console, see that universe of 200+ services, and think, “I have control.”

In reality, you’ve just volunteered to be the kingdom’s chief plumber, electrician, structural engineer, and sanitation officer, all while juggling the royal budget. And you only wanted to build a shop to sell t-shirts.

For years, we in the tech world have accepted this as the default. We believed that “cloud-native” meant getting your hands dirty. We believed that to be a “real” engineer, you had to speak fluent IAM JSON and understand the intimate details of VPC peering.

Let’s be honest with ourselves. In 2025, meticulously managing your own raw AWS infrastructure isn’t a competitive advantage. It’s an anchor. It’s the equivalent of insisting on milling your own flour and churning your own butter just to make a sandwich.

It’s time to call it what it is: the new technical debt.

The seduction of total control

Why did we all fall for this? Because “control” is a powerfully seductive idea.

We were sold a dream of infinite knobs and levers. We thought, “If I can configure everything, I can optimize everything!” We pictured ourselves as brilliant cloud architects, seated at a vast console, fine-tuning the global engine of our application.

But this “control” is a mirage. What it really means is the freedom to spend a Tuesday afternoon debugging why a security group is blocking traffic, or the privilege of becoming an unwilling expert on data transfer pricing.

It’s not strategic control; it’s janitorial control. And it’s costing us dearly.

The three-headed monster of ‘Control’

When you sign up for that “control,” you unknowingly invite a three-headed monster to live in your office. It doesn’t ask for rent, but it feeds on your time, your money, and your sanity.

1. The labyrinth of accidental complexity

You just want to launch a simple web app. How hard can it be?

Famous last words.

To do it “properly” in a raw AWS account, your journey looks less like engineering and more like an archaeological dig.

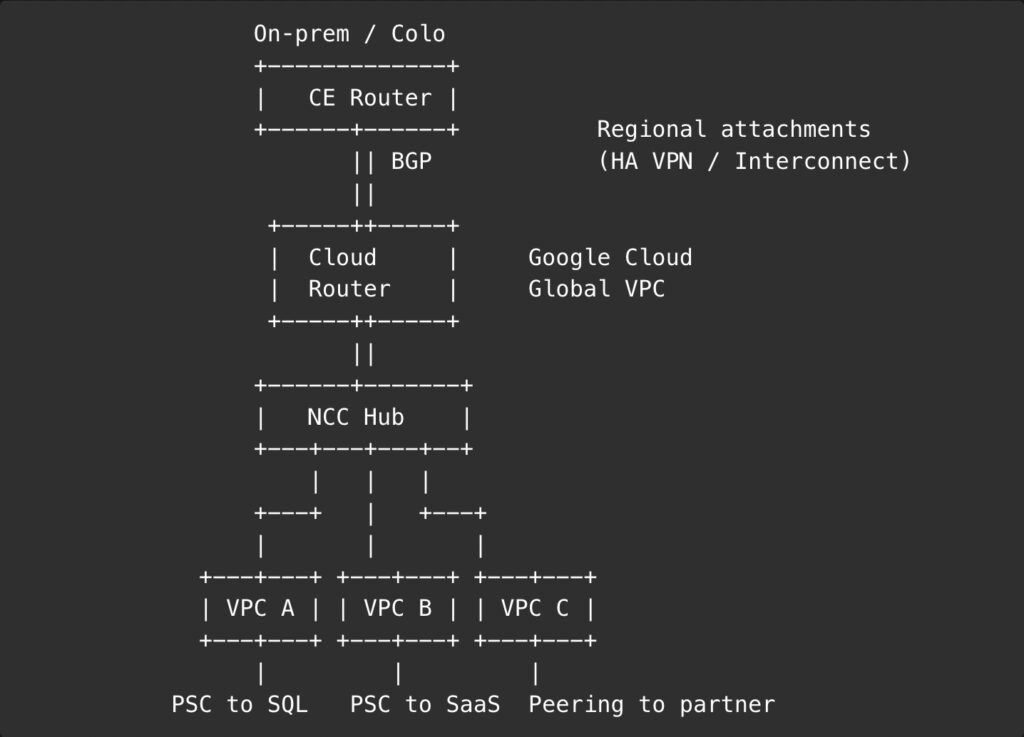

First, you must enter the dark labyrinth of VPCs, subnets, and NAT Gateways, a plumbing job so complex it would make a Roman aqueduct engineer weep. Then, you must present a multi-page, blood-signed sacrifice to the gods of IAM, praying that your policy document correctly grants one service permission to talk to another without accidentally giving “Public” access to your entire user database.

This is before you’ve even provisioned a server. Want a database? Great. Now you’re a database administrator, deciding on instance types, read replicas, and backup schedules. Need storage? Welcome to S3, where you’re now a compliance officer, managing bucket policies and lifecycle rules.

What started as building a house has turned into you personally mining the copper for the wiring. The complexity isn’t a feature; it’s a bug.
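To make the IAM worry above a little more concrete, here is a minimal Python sketch of the kind of check you end up writing for yourself: it scans a resource policy for `Allow` statements whose principal is a wildcard. The bucket name, role ARN, and `Sid` values are made up for illustration, and the check is deliberately crude (it ignores `Condition` blocks entirely), so treat it as a sketch rather than a real audit tool.

```python
def _is_wildcard(principal):
    """Return True if a Principal value means 'anyone'."""
    if principal == "*":
        return True
    if isinstance(principal, dict):
        for value in principal.values():
            values = value if isinstance(value, list) else [value]
            if "*" in values:
                return True
    return False


def find_public_statements(policy):
    """Return the Allow statements that grant access to a wildcard principal.

    Crude on purpose: Condition blocks are ignored, so a statement that is
    actually restricted by a condition will still be flagged.
    """
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may be a bare object
        statements = [statements]
    return [
        s for s in statements
        if s.get("Effect") == "Allow" and _is_wildcard(s.get("Principal"))
    ]


# Hypothetical policy: the names and ARNs below are illustrative only.
EXAMPLE_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "PublicRead",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-user-exports/*",
        },
        {
            "Sid": "AppRoleRead",
            "Effect": "Allow",
            "Principal": {"AWS": "arn:aws:iam::123456789012:role/app-role"},
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-user-exports/*",
        },
    ],
}

for statement in find_public_statements(EXAMPLE_POLICY):
    print("open to everyone:", statement["Sid"])
```

One misplaced `"*"` is all it takes, and nothing in the console stops you from typing it.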

2. The financial hemorrhage

AWS pricing is perhaps the most compelling work of high-fantasy fiction in modern times. “Pay for what you use” sounds beautifully simple.

It’s the “use” part that gets you.

It’s like a bar where the drinks are cheap, but the peanuts are $50, the barstool costs $20 an hour, and you’re charged for the oxygen you breathe.

This “control” means you are now the sole accountant for a thousand tiny, running meters. You’re paying for idle EC2 instances you forgot about, unattached EBS volumes that are just sitting there, and NAT Gateways that cheerfully process data at a price that would make a loan shark blush.

And let’s talk about data transfer. That’s the fine print, written in invisible ink, at the bottom of the contract. It’s the silent killer of cloud budgets, the gotcha that turns your profitable month into a financial horror movie.

Without a full-time “Cloud Cost Whisperer,” your bill becomes a monthly lottery where you always lose.
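If the "thousand tiny meters" image feels abstract, a rough Python sketch of the arithmetic may help. Every price and quantity below is a made-up placeholder, not a real AWS rate; the only point is that several small meters running all month add up to a number nobody budgeted for.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common approximation for an average month


@dataclass
class Meter:
    name: str
    unit_price: float       # placeholder price per unit, NOT a real AWS rate
    units_per_month: float  # hours, GB, or GB-months, depending on the meter

    @property
    def monthly_cost(self) -> float:
        return self.unit_price * self.units_per_month


def monthly_total(meters) -> float:
    """Sum the monthly cost of every running meter."""
    return sum(m.monthly_cost for m in meters)


# Hypothetical forgotten resources, with illustrative numbers only.
METERS = [
    Meter("idle instance (per hour)", 0.10, HOURS_PER_MONTH),
    Meter("unattached volume (per GB-month)", 0.10, 200),
    Meter("NAT gateway (per hour)", 0.05, HOURS_PER_MONTH),
    Meter("NAT data processing (per GB)", 0.05, 1000),
]

for meter in METERS:
    print(f"{meter.name:<36} {meter.monthly_cost:>8.2f}")
print(f"{'total':<36} {monthly_total(METERS):>8.2f}")
```

Swap in the rates from your own bill and the list rarely stops at four lines.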

3. The developer’s schizophrenia

The most expensive-to-fix part of this whole charade is the human cost.

We hire brilliant software developers to build brilliant products. Then, we immediately sabotage them by demanding they also be expert network engineers, security analysts, database administrators, and billing specialists.

The modern “Full-Stack Developer” is now a “Full-Cloud-Stack-Network-Security-Billing-Analyst-Developer.” The cognitive whiplash is brutal.

One moment you’re deep in application logic, crafting an algorithm, designing a user experience, and the next, you’re yanked out to diagnose a slow-running SQL query, optimize a CI/CD pipeline, or figure out why the “simple” terraform apply just failed for the fifth time.

This isn’t “DevOps.” This is a frantic one-person show, a short-order cook trying to run a 12-station Michelin-star kitchen alone. The cost of this context-switching is staggering. It’s the death of focus. It’s how great products become mediocre.

What we were all pretending not to want

For years, we’ve endured this pain. We’ve worn our complex Terraform files and our sprawling AWS diagrams as badges of honor. It was a form of intellectual hazing.

But what if we just… stopped?

What if we admitted what we really want? We don’t want to configure VPCs. We want our app to be secure and private. We don’t want to write auto-scaling policies. We want our app to simply not fall over when it gets popular.

We don’t want to spend a week setting up a deployment pipeline. We just want to git push and have it deploy.

This isn’t laziness. This is sanity. We’ve finally realized that the business value isn’t in the plumbing; it’s in the water coming out of the tap.

The glorious liberation of abstraction

This realization has sparked a revolution. The future of cloud computing is, thankfully, becoming gloriously boring.

The new wave of platforms (PaaS, serverless environments, and advanced, opinionated frameworks) is built to do one thing: handle the plumbing so you don’t have to.

They run on top of the same powerful AWS (or GCP, or Azure) foundation, but they present you with a contract that makes sense. “You give us code,” they say, “and we’ll run it, scale it, secure it, and patch it. Go build your business.”

This isn’t a dumbed-down version of the cloud. It’s a sane one. It’s an abstraction layer that treats infrastructure like the utility it was always supposed to be.

Think about your home’s electricity. You just plug in your toaster and it works. You don’t have to manage the power plant, check the voltage on the high-tension wires, or personally rewire the neighborhood transformer. You just want toast.

The new platforms are finally letting us just make toast.

So what’s the sane alternative?

“Abstraction” is a lovely, comforting word. But it’s also vague. It sounds like magic. It isn’t. It’s just a different set of trade-offs, where you trade the janitorial control of raw AWS for the productive speed of a platform that has opinions.

And it turns out, there’s an entire ecosystem of these “sane alternatives,” each designed to cure a specific infrastructure-induced headache.

- The frontend valet service (e.g., Vercel, Netlify):

This is the “I don’t even want to know where the server is” approach. You hand them your Next.js or React repo, and they handle everything else: global CDN, CI/CD, caching, serverless functions. It’s the git push dream realized. You’re not just getting a toaster; you’re getting a personal chef who serves you perfect toast on a silver platter, anywhere in the world, in 100 milliseconds.

- The backend butler (e.g., Supabase, Firebase, Appwrite):

Remember the last time you thought, “You know what would be fun? Building user authentication from scratch!”? No, you didn’t. Because it’s a nightmare. These “Backend-as-a-Service” platforms are the butlers who handle the messy stuff (database provisioning, auth, file storage) so you can focus on the actual party (your app’s features).

- The “furniture, but assembled” (e.g., Render, Railway, Heroku):

This is the sweet spot for most full-stack apps. You still have your Dockerfile (you know, the “instructions”), but you’re not forced to build the furniture yourself with a tiny Allen key (that’s Kubernetes). You give them a container, they run it, scale it, and even attach the managed database for you. It’s the grown-up version of what we all wished infrastructure was.

- The tamed leviathan (e.g., GKE Autopilot, EKS on Fargate):

Okay, so your company is massive. You need the raw, terrifying power of Kubernetes. Fine. But you still don’t have to build the nuclear submarine yourself. These services are the “hire a professional crew” option. You get the power of Kubernetes, but Google’s or Amazon’s own engineers handle the patching, scaling, and 3 AM “node-is-down” panic attacks. You get to be the Admiral, not the guy shoveling coal in the engine room.
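What does “you give us code” look like from your side of the contract? For the container-style platforms it is often little more than “listen on the port we hand you.” Here is a minimal Python sketch of that idea using only the standard library. It assumes the platform passes the port in a `PORT` environment variable (Heroku and Render do this; check the docs for anything else), and the handler, default port, and function names are hypothetical.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def resolve_port(env, default=8000):
    """Return the port the platform asked for, or a local default."""
    return int(env.get("PORT", default))


class HelloHandler(BaseHTTPRequestHandler):
    """Tiny stand-in for your real application."""

    def do_GET(self):
        body = b"hello from a container\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the example quiet; a real app would log properly


def build_server(env=os.environ):
    # Bind to all interfaces so the platform's router can reach the process.
    return HTTPServer(("0.0.0.0", resolve_port(env)), HelloHandler)


def main():
    server = build_server()
    print(f"listening on port {server.server_address[1]}")
    server.serve_forever()
```

Call `main()` from your container’s entrypoint and that is your entire infrastructure footprint. No subnets, no route tables; the platform decides where the process runs and how traffic gets to it.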

Stop building the car and just drive

Managing your own raw AWS account in 2025 is the very definition of technical debt. It’s an unhedged, high-interest loan you took out for no good reason, and you’re paying it off every single day with your team’s time, focus, and morale.

That custom-tuned VPC you spent three weeks on? It’s not your competitive advantage. That hand-rolled deployment script? It’s not your secret sauce.

Your product is your competitive advantage. Your user experience is your secret sauce.

The industry is moving. The teams that win will be the ones that spend less time tinkering with the engine and more time actually driving. The real work isn’t building the Rube Goldberg machine; it’s building the thing the machine is supposed to make.

So, for your own sanity, close that AWS console. Let someone else manage the plumbing.

Go build something that matters.