The pager goes off at 3 AM. Your most critical Kubernetes node is gasping for air. You SSH into the box, but your fancy cloud observability agents are completely frozen. You cannot run top; htop is a distant dream, and your metrics dashboard is just a spinning loading wheel of despair.

What do you do now?

Most people panic. But if you know where to look, your Linux server has a secret, real-time dashboard built right in. It requires zero agents, consumes zero disk space, and is literally generating its data on the fly just for you.

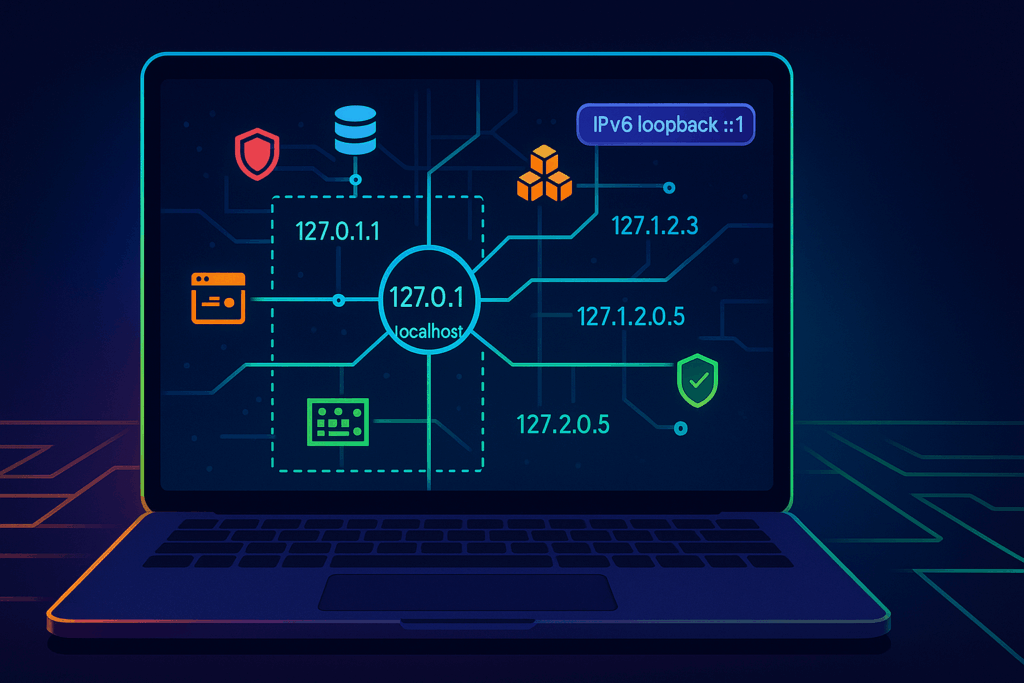

Welcome to the weird, wonderful, and slightly chaotic world of the proc pseudo-filesystem.

The hallucinated filesystem

If you run ls /proc, you will see what looks like a messy drawer full of text files and numbered directories. It is easy to dismiss it as legacy kernel clutter. But here is the bizarre truth about this directory. It does not exist.

Not on your SSD, anyway. The proc filesystem is a pure hallucination managed by the kernel. Nothing in it is stored anywhere; the kernel generates each file's contents from its in-memory data structures at the exact microsecond you read it. That is why most of these files report a size of zero bytes, yet cat prints plenty of text. When you run cat /proc/uptime, the kernel intercepts your request, hastily scribbles down the current system state, and hands it back to you.
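
You can watch the hallucination happen. The minimal Python sketch below (the helper name reported_vs_actual is ours, purely for illustration) compares the size stat() reports for a file with the number of bytes a read actually returns. On a typical Linux box, /proc/uptime should report 0 yet still hand back a line of text.

```python
import os


def reported_vs_actual(path: str) -> tuple[int, int]:
    """Return (size reported by stat(), number of bytes a read returns)."""
    reported = os.stat(path).st_size
    with open(path, "rb") as f:
        actual = len(f.read())
    return reported, actual


if __name__ == "__main__":
    # For a regular file the two numbers match; for most /proc files stat()
    # says 0 because the content only exists once somebody reads it.
    size, length = reported_vs_actual("/proc/uptime")
    print(f"/proc/uptime: stat says {size} bytes, read returned {length} bytes")
```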

It is the “everything is a file” Unix philosophy taken to its absolute, absurd logical conclusion. And once you understand how to read it, it becomes an indispensable tool for cloud architects and DevOps engineers.

Gold nuggets for your daily rotation

You do not need to memorize every file in here. Treat it like a hardware store. You only need to know where the hammers and screwdrivers are kept.

The memory health check

Checking /proc/meminfo gives you your memory health at a glance, even on a box where free is not installed. It is the raw, unfiltered truth about your RAM: free -h simply reads this file and reformats it for humans.
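
A minimal sketch of reading it yourself (the helper names are hypothetical). It focuses on MemAvailable, the kernel's own estimate of how much memory can be handed to new applications without swapping, instead of MemFree, which ignores reclaimable page cache and therefore looks alarmingly low on any busy server. MemAvailable only exists on Linux 3.14 and later, so the sketch falls back to MemFree.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Parse /proc/meminfo into {field: value}.

    Most lines look like 'MemTotal:  16303428 kB' (value in KiB); a few, such
    as HugePages_Total, carry a bare count with no unit, which is kept as-is.
    """
    info: dict[str, int] = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue  # skip anything that does not look like 'Key: value'
        parts = rest.split()
        if parts and parts[0].isdigit():
            info[key.strip()] = int(parts[0])
    return info


def available_percent(info: dict[str, int]) -> float:
    """Share of RAM the kernel considers available for new allocations."""
    # Fall back to MemFree on kernels older than 3.14 (no MemAvailable line).
    available = info.get("MemAvailable", info.get("MemFree", 0))
    return 100.0 * available / info["MemTotal"]


if __name__ == "__main__":
    with open("/proc/meminfo") as f:
        meminfo = parse_meminfo(f.read())
    print(f"{available_percent(meminfo):.1f}% of RAM available")
```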

The CPU heartbeat

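You can check /proc/loadavg and /proc/stat to understand CPU load and scheduler activity. Load average is like looking at the queue outside a nightclub: it counts the processes already on the CPU dance floor plus the ones waiting in line to get on it. On Linux it also counts tasks stuck in uninterruptible sleep, usually waiting on storage, so a high load with idle CPUs often points at I/O rather than compute. The cpu lines in /proc/stat, by contrast, are cumulative time counters since boot, so you need two readings to turn them into a utilization percentage.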
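
A minimal sketch of both reads, assuming the field layouts documented in proc(5): /proc/loadavg holds the 1, 5 and 15 minute averages, a runnable/total task count and the last PID handed out, while the aggregate cpu line of /proc/stat holds cumulative counters in clock ticks. Because those counters only ever grow, utilization comes from the difference between two samples. The function names are hypothetical, and folding iowait into idle is one common convention rather than the only one.

```python
import time


def parse_loadavg(text: str) -> dict:
    """Split /proc/loadavg, e.g. '0.52 0.58 0.59 2/1234 56789'."""
    one, five, fifteen, tasks, last_pid = text.split()[:5]
    running, total = tasks.split("/")
    return {
        "load": (float(one), float(five), float(fifteen)),
        "runnable": int(running),     # currently runnable scheduling entities
        "total_tasks": int(total),    # all scheduling entities on the system
        "last_pid": int(last_pid),    # most recently assigned PID
    }


def cpu_times(stat_text: str) -> tuple[int, int]:
    """Return (idle, total) ticks from the aggregate 'cpu' line of /proc/stat."""
    for line in stat_text.splitlines():
        if line.startswith("cpu "):
            # user nice system idle iowait irq softirq steal; guest time is
            # already included in user/nice, so the trailing columns are skipped.
            values = [int(v) for v in line.split()[1:9]]
            idle = values[3] + values[4]  # idle + iowait
            return idle, sum(values)
    raise ValueError("no aggregate cpu line found")


def busy_percent(before: tuple[int, int], after: tuple[int, int]) -> float:
    """CPU utilization between two cpu_times() samples."""
    idle_delta = after[0] - before[0]
    total_delta = after[1] - before[1]
    if total_delta <= 0:
        return 0.0
    return 100.0 * (total_delta - idle_delta) / total_delta


if __name__ == "__main__":
    with open("/proc/loadavg") as f:
        print(parse_loadavg(f.read()))
    with open("/proc/stat") as f:
        first = cpu_times(f.read())
    time.sleep(1)
    with open("/proc/stat") as f:
        second = cpu_times(f.read())
    print(f"CPU busy over the last second: {busy_percent(first, second):.1f}%")
```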

The network socket inventory

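When you are trapped inside a stripped-down Docker container that lacks ss or netstat, /proc/net/tcp and /proc/net/udp are your best friends. They list the IPv4 TCP and UDP sockets visible in the container's network namespace, listening sockets included (IPv6 sockets live in /proc/net/tcp6 and /proc/net/udp6). The catch is that addresses, ports, and connection states are all hex-encoded.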
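
Python is rarely present in a truly stripped image, but the decoding is easier to follow in code than in prose. A minimal sketch (hypothetical helper names), assuming the usual /proc/net/tcp row layout: a slot number, local and remote addresses as hex IP:port pairs, then the state as a hex code. The IPv4 address is printed as a 32-bit number in the host's byte order, so packing it back in native order recovers the dotted address; the state codes follow the kernel's TCP state numbering.

```python
import socket
import struct

# TCP states as the kernel numbers them (printed in hex in column 'st').
TCP_STATES = {
    "01": "ESTABLISHED", "02": "SYN_SENT", "03": "SYN_RECV",
    "04": "FIN_WAIT1", "05": "FIN_WAIT2", "06": "TIME_WAIT",
    "07": "CLOSE", "08": "CLOSE_WAIT", "09": "LAST_ACK",
    "0A": "LISTEN", "0B": "CLOSING",
}


def decode_ipv4(hex_addr: str) -> str:
    """Turn a hex 'ADDR:PORT' pair, e.g. '0100007F:0CEA', into 'a.b.c.d:port'."""
    ip_hex, port_hex = hex_addr.split(":")
    # The address is a host-order integer; native packing restores its bytes.
    ip = socket.inet_ntoa(struct.pack("=I", int(ip_hex, 16)))
    return f"{ip}:{int(port_hex, 16)}"


def parse_net_tcp(text: str) -> list[tuple[str, str, str]]:
    """Return (local, remote, state) for every socket row in /proc/net/tcp."""
    rows = []
    for line in text.splitlines()[1:]:  # the first line is the column header
        fields = line.split()
        if len(fields) < 4:
            continue
        state = TCP_STATES.get(fields[3], fields[3])
        rows.append((decode_ipv4(fields[1]), decode_ipv4(fields[2]), state))
    return rows


if __name__ == "__main__":
    with open("/proc/net/tcp") as f:
        for local, remote, state in parse_net_tcp(f.read()):
            print(f"{state:<12} {local:<22} -> {remote}")
```

On a little-endian machine (x86-64, most ARM64 servers), 0100007F:0CEA decodes to 127.0.0.1:3306, a local MySQL-style listener.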

The runtime clock

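A quick look at /proc/uptime gives you two numbers in a single line: the seconds since boot and the idle time summed across all CPUs (so on a multi-core machine the second number can be larger than the first). It is incredibly easy to parse for quick uptime checks in your automation scripts.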
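
A minimal sketch (hypothetical helper names) that parses both numbers and turns the first into something a human can read. Dividing the idle figure by uptime times the CPU count gives a rough average idle share since boot; os.cpu_count() is an approximation here, since it ignores CPUs that were offline for part of that time.

```python
import os


def parse_uptime(text: str) -> tuple[float, float]:
    """Return (seconds since boot, idle seconds summed over all CPUs)."""
    up, idle = text.split()[:2]
    return float(up), float(idle)


def humanize(seconds: float) -> str:
    """Format a duration as 'Xd Yh Zm' (seconds are dropped)."""
    minutes, _ = divmod(int(seconds), 60)
    hours, minutes = divmod(minutes, 60)
    days, hours = divmod(hours, 24)
    return f"{days}d {hours}h {minutes}m"


if __name__ == "__main__":
    with open("/proc/uptime") as f:
        up, idle = parse_uptime(f.read())
    cpus = os.cpu_count() or 1
    print(f"up {humanize(up)}, roughly {100 * idle / (up * cpus):.1f}% idle since boot")
```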

Peeking inside running applications

If the root of this filesystem is the global state of the machine, the numbered directories are the personal diaries of every running application. Each number corresponds to a Process ID.

Finding the exact command

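Sometimes ps truncates output or is not installed. You can read /proc/<pid>/cmdline to see the exact, literal command that launched the process, with arguments separated by null bytes instead of spaces. The one caveat: a process is allowed to overwrite its own argument list, and some daemons do exactly that to advertise their status.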
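
A minimal sketch (hypothetical helper names) of turning that null-separated blob back into an argument list. shlex.join, available from Python 3.8, re-quotes the arguments so the printed command can be pasted straight into a shell.

```python
import shlex


def split_cmdline(raw: bytes) -> list[str]:
    """Split the raw bytes of /proc/<pid>/cmdline into an argv list.

    Arguments are separated (and normally terminated) by NUL bytes, so an
    argument containing spaces survives intact. Trailing NUL padding is
    dropped; kernel threads have an empty cmdline, which becomes [].
    """
    if not raw:
        return []
    return [arg.decode(errors="replace") for arg in raw.rstrip(b"\0").split(b"\0")]


def read_cmdline(pid: str = "self") -> list[str]:
    with open(f"/proc/{pid}/cmdline", "rb") as f:
        return split_cmdline(f.read())


if __name__ == "__main__":
    # Pass a real PID instead of "self" to inspect another process.
    print(shlex.join(read_cmdline()))
```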

Reading the environment

Checking /proc/<pid>/environ reveals the environment variables the process started with; changes the process makes to its own environment later usually do not show up here. It is an absolute goldmine for debugging and a terrifying danger zone for security. If your application receives database passwords or API keys through environment variables, anyone allowed to read this file (the process owner or root) can read those secrets in plain text.
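
A minimal sketch of reading that file a little more safely (hypothetical helper names). Entries are KEY=value pairs separated by null bytes. The redaction step masks values whose names contain PASSWORD, SECRET, TOKEN or KEY; that keyword list is an illustrative assumption, not a real secret detector, so treat the output with care anyway.

```python
# Illustrative, deliberately incomplete list of name fragments to mask.
SECRET_HINTS = ("PASSWORD", "SECRET", "TOKEN", "KEY")


def parse_environ(raw: bytes) -> dict[str, str]:
    """Turn the NUL-separated KEY=value pairs of /proc/<pid>/environ into a dict."""
    env: dict[str, str] = {}
    for entry in raw.split(b"\0"):
        if not entry:
            continue
        key, sep, value = entry.decode(errors="replace").partition("=")
        if sep:
            env[key] = value
    return env


def redacted(env: dict[str, str]) -> dict[str, str]:
    """Mask values whose names look like credentials before printing them."""
    return {
        k: ("***" if any(hint in k.upper() for hint in SECRET_HINTS) else v)
        for k, v in env.items()
    }


if __name__ == "__main__":
    # Reading another process's environ typically requires the same user or root.
    with open("/proc/self/environ", "rb") as f:
        for key, value in sorted(redacted(parse_environ(f.read())).items()):
            print(f"{key}={value}")
```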

Chasing file descriptors

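If you ever hit a “too many open files” error, look inside /proc/<pid>/fd/. This directory contains symlinks to every single file, socket, and pipe the application is currently holding onto. Count them and compare the total with the “Max open files” row of /proc/<pid>/limits to see how close the process is to its ceiling.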
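
A minimal sketch (hypothetical helper names) that counts the entries in /proc/<pid>/fd and compares them with the soft “Max open files” value from /proc/<pid>/limits, which is the ceiling the “too many open files” error refers to. It assumes the limits row keeps its usual name, soft, hard, units column order.

```python
import os
from typing import Optional


def count_open_fds(pid: str = "self") -> int:
    """Number of entries in /proc/<pid>/fd, one symlink per open descriptor.

    With pid="self" the count includes the descriptor used to list the directory.
    """
    return len(os.listdir(f"/proc/{pid}/fd"))


def soft_nofile_limit(limits_text: str) -> Optional[int]:
    """Soft 'Max open files' value from /proc/<pid>/limits (None if unlimited)."""
    for line in limits_text.splitlines():
        if line.startswith("Max open files"):
            soft = line[len("Max open files"):].split()[0]
            return None if soft == "unlimited" else int(soft)
    raise ValueError("'Max open files' row not found")


if __name__ == "__main__":
    pid = "self"  # replace with the PID of the suspect process
    with open(f"/proc/{pid}/limits") as f:
        limit = soft_nofile_limit(f.read())
    print(f"{count_open_fds(pid)} open descriptors, soft limit {limit}")
```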

Surviving the cloud native illusion

Containers are, fundamentally, just Linux processes lying to themselves about how much of the world they own. They think they are the only tenant in the building. When you are working with Kubernetes, this pseudo-filesystem bridges the gap between the illusion and the reality.

When eBPF tools or your sidecar agents fail, this interface is your manual override. You can check /proc/<pid>/cgroup to see exactly which control groups the process belongs to; the limits clamping down on it live in the matching directory under /sys/fs/cgroup. If a container keeps getting killed by the out-of-memory (OOM) killer, you can watch /proc/<pid>/oom_score to see how angry the kernel is getting at that specific process. The higher the number, the more likely the kernel is going to take it out back and end its misery.
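
A minimal sketch for both checks, assuming the hierarchy-ID:controller-list:path line format described in proc(5) and a cgroup v2 (unified) hierarchy mounted at /sys/fs/cgroup, which is common on current distributions but not guaranteed. The helper names are hypothetical; memory_max_path only works out where the v2 memory limit file should be, and read_oom_score simply returns the kernel's current number.

```python
from typing import Optional


def parse_cgroup(text: str) -> list[tuple[str, list[str], str]]:
    """Parse /proc/<pid>/cgroup into (hierarchy_id, controllers, path) tuples.

    cgroup v2 hosts show a single line such as '0::/kubepods.slice/...';
    cgroup v1 hosts show one line per hierarchy, e.g. '4:memory:/kubepods/...'.
    """
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue
        # maxsplit=2 keeps any ':' inside the path itself intact
        hierarchy, controllers, path = line.split(":", 2)
        entries.append((hierarchy, [c for c in controllers.split(",") if c], path))
    return entries


def memory_max_path(cgroup_text: str, mount: str = "/sys/fs/cgroup") -> Optional[str]:
    """Location of the v2 memory.max file for this cgroup, or None on pure v1."""
    for hierarchy, controllers, path in parse_cgroup(cgroup_text):
        if hierarchy == "0" and not controllers:  # the unified (v2) hierarchy
            return f"{mount}{path.rstrip('/')}/memory.max"
    return None


def read_oom_score(pid: str = "self") -> int:
    """Current OOM score; a higher value makes the process a likelier target."""
    with open(f"/proc/{pid}/oom_score") as f:
        return int(f.read().strip())


if __name__ == "__main__":
    with open("/proc/self/cgroup") as f:
        cgroup_text = f.read()
    print("cgroups:", parse_cgroup(cgroup_text))
    print("memory limit file:", memory_max_path(cgroup_text))
    print("oom_score:", read_oom_score())
```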

War stories from the trenches

Theoretical knowledge is great, but let us look at how this saves your skin when you are sleep-deprived.

The phantom disk filler

Your alerts say the disk is at 100%. You find a massive 50GB application log and delete it. You run df -h again. The disk is still at 100%. What happened? The application is still writing to the deleted file, and the kernel does not release a file's space until the last process holding it open closes it. Running lsof or digging through /proc/<pid>/fd will show you the deleted file still held open by the stubborn process, with “(deleted)” appended to its symlink target. Restart the process, and your 50GB magically returns.
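
A rough sketch of the hunt without lsof, assuming only that the kernel appends “ (deleted)” to the symlink target of an unlinked file, as it does on current kernels. The function name is hypothetical. Run it as root to see every process; without privileges it quietly skips processes it cannot inspect.

```python
import os


def deleted_open_files(proc_root: str = "/proc") -> list[tuple[int, str, int]]:
    """Find descriptors whose file was unlinked but is still held open.

    Returns (pid, symlink_target, size_in_bytes) tuples.
    """
    found = []
    for entry in os.listdir(proc_root):
        if not entry.isdigit():
            continue  # only the numeric directories are processes
        fd_dir = os.path.join(proc_root, entry, "fd")
        try:
            fds = os.listdir(fd_dir)
        except OSError:
            continue  # not ours to inspect, or the process just exited
        for fd in fds:
            link = os.path.join(fd_dir, fd)
            try:
                target = os.readlink(link)
                if target.endswith(" (deleted)"):
                    # stat() follows the link to the still-open inode.
                    found.append((int(entry), target, os.stat(link).st_size))
            except OSError:
                continue  # descriptor closed while we were looking
    return found


if __name__ == "__main__":
    for pid, target, size in sorted(deleted_open_files(), key=lambda t: -t[2]):
        print(f"pid {pid}: {target} still holds {size / 1e9:.2f} GB")
```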

The frozen startup

An application hangs immediately on startup. It is not using CPU, and it is not crashing. What is it waiting for? Inspecting /proc/<pid>/wchan will usually tell you the exact kernel function where the process went to sleep. If it prints 0 instead, the process is either running or you lack permission to inspect it.
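
A minimal sketch for the frozen-startup case (hypothetical helper names): read the State line from /proc/<pid>/status to see whether the process is in interruptible (S) or uninterruptible (D) sleep, then read wchan for the function name. It assumes you have permission to inspect the process; when you do not, or when the task is actually running, wchan reads as 0.

```python
import sys


def process_state(status_text: str) -> str:
    """Return the 'State:' value from /proc/<pid>/status, e.g. 'S (sleeping)'."""
    for line in status_text.splitlines():
        if line.startswith("State:"):
            return line.split(":", 1)[1].strip()
    raise ValueError("no State: line found")


def wait_channel(pid: str = "self") -> str:
    """Kernel function the task sleeps in, or '0' if running or not permitted."""
    with open(f"/proc/{pid}/wchan") as f:
        return f.read().strip() or "0"


if __name__ == "__main__":
    pid = sys.argv[1] if len(sys.argv) > 1 else "self"
    with open(f"/proc/{pid}/status") as f:
        state = process_state(f.read())
    print(f"state={state} wchan={wait_channel(pid)}")
```

A D state that never clears usually means the process is blocked on I/O, for example storage that has stopped responding.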

The dark side of the dashboard

It is not all sunshine and perfectly formatted data. There are traps here.

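First, formatting varies between kernel versions: new fields appear, columns get appended, and some values, process names for instance, can contain spaces. Writing a strict regular expression to parse these files in a production bash script is a recipe for tears. Always use defensive coding: look fields up by name where the file offers names, and tolerate missing or extra columns.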
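
One classic trap is /proc/<pid>/stat. Its second field is the command name wrapped in parentheses, and that name may itself contain spaces or a ')', so splitting the whole line on whitespace silently shifts every later column. A minimal sketch of the defensive approach (hypothetical helper name), assuming the column order documented in proc(5): split on the last ')' first, and only then on whitespace.

```python
def parse_pid_stat(text: str) -> dict:
    """Defensively parse the first few fields of /proc/<pid>/stat."""
    pid_part, _, rest = text.partition(" (")
    # The command name ends at the LAST ')'; later fields never contain one.
    comm, _, tail = rest.rpartition(")")
    fields = tail.split()  # fields[0] is field 3 in proc(5) numbering
    return {
        "pid": int(pid_part),
        "comm": comm,
        "state": fields[0],        # field 3
        "ppid": int(fields[1]),    # field 4
        "utime": int(fields[11]),  # field 14, in clock ticks
        "stime": int(fields[12]),  # field 15, in clock ticks
    }


if __name__ == "__main__":
    with open("/proc/self/stat") as f:
        print(parse_pid_stat(f.read()))
```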

Second, the /proc/sys/ directory is not just for looking. It is for touching. This is where kernel tunables live, the underlying mechanism for sysctl. A write here takes effect immediately and lasts until the next reboot (persistent settings belong in /etc/sysctl.conf or /etc/sysctl.d/), so the wrong value can break your network stack or destabilize the whole machine faster than you can hit Ctrl+C. Look, but do not touch unless you have read the documentation twice.
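
Since sysctl is a front end to this directory, a dotted sysctl name maps onto a file path by turning dots into slashes. The read-only sketch below (hypothetical helper names) does exactly that and deliberately offers no write function. It ignores the corner case of name components that contain dots themselves, such as VLAN interface names.

```python
def sysctl_path(name: str) -> str:
    """Map a dotted sysctl name such as 'net.ipv4.ip_forward' to its /proc/sys file."""
    # Simplification: components containing dots (e.g. 'eth0.100') are not handled.
    return "/proc/sys/" + name.replace(".", "/")


def read_sysctl(name: str) -> str:
    """Read-only on purpose: look, but do not touch."""
    with open(sysctl_path(name)) as f:
        return f.read().strip()


if __name__ == "__main__":
    for name in ("vm.swappiness", "net.ipv4.ip_forward"):
        print(f"{name} = {read_sysctl(name)}")
```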

Quick reference sheet

Keep this list handy for your next terminal session.

- cat /proc/cpuinfo shows your CPU details (model, cores, feature flags)

- cat /proc/version gives you the exact kernel version and the compiler it was built with (distro details live in /etc/os-release)

- ls -l /proc/<pid>/fd displays live file descriptors

- cat /proc/net/dev reveals network interface stats

- sync; echo 3 > /proc/sys/vm/drop_caches (as root) frees up clean pagecache, dentries, and inodes (and makes your database administrator incredibly nervous)

Keep a terminal open to your kernel

This interface is the universal API. It is present when your monitoring tools are broken, when your containers are stripped bare, and when the orchestrator is lying to you.

Next time you SSH into a server or run kubectl exec into a pod, take a second to explore this directory before you reflexively type htop. In the cloud, understanding this in-memory filesystem means you understand exactly what your platform sees. And that is the kind of visibility no vendor can sell you.