Last week, someone in your LinkedIn feed posted about being thrilled to build AI agents over the weekend. The post had forty-seven likes, a handful of rocket emojis, and several comments praising their growth mindset. You stared at the screen and felt a familiar, dull panic in your gut. It was not inspiration. It was the exact same feeling you get when you watch someone pretend to genuinely enjoy a room-temperature kale and gravel smoothie.

Nobody with a healthy central nervous system is genuinely thrilled to learn prompt engineering frameworks on a Saturday morning. They are just terrified of what happens to their mortgage if they do not.

You probably have your own personal monument to this anxiety. It is a browser tab you keep meaning to open. A course you bought during a Black Friday panic sale and never started. A corporate Slack thread about AI readiness that you skimmed, starred, and immediately buried under a pile of actual work. It is the quiet admission that you do not know enough to stay relevant, paired with the even quieter admission that simply bookmarking the resource made you feel slightly less like a dinosaur.

You have been writing production code, configuring infrastructure, and surviving catastrophic deployment rollbacks for years. By most reasonable measures, you know exactly what you are doing. And yet, that browser tab sits there. It is a digital talisman against obsolescence.

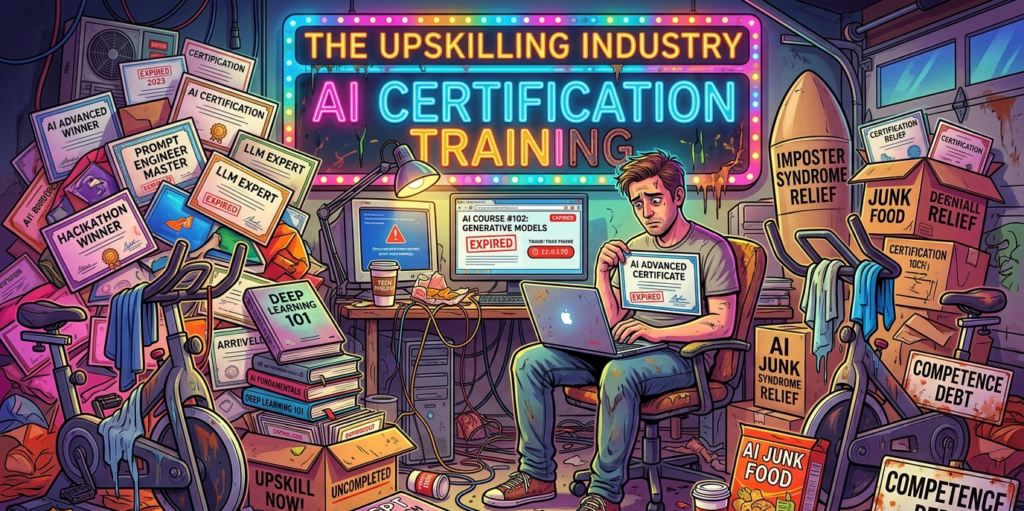

There is a name for this modern condition. I call it competence debt. It is the silent, creeping rot that happens when you trade durable mastery for perishable certifications. And an entire multi-billion-dollar upskilling industry is banking on you never figuring out the difference.

The ecosystem of the forgotten browser tab

That Udemy or Coursera tab has been open in your browser for so long that it has practically developed its own microbial ecosystem. It sits there, glowing faintly between Jira and Slack, judging you with the silent, suffocating disappointment of a stationary bicycle that you now use exclusively for drying wet dress shirts.

You will click it eventually. You will watch the first module at 1.5x speed. Not because the course will teach you something deeply structural about computer science. Not because it will make you meaningfully better at the architectural work that actually keeps your company afloat. You will do it because the credential economy demands constant proof of currency, and currency is exactly what expires.

Buying a deeply discounted course on the latest Large Language Model API is not the acquisition of knowledge. It is the purchase of a psychological suppository for imposter syndrome. You administer it, you feel a warm rush of proactive professional development for exactly twelve minutes, and for the rest of the quarter, the only thing you actually retain is a PDF certificate and a vague, persistent sense of guilt.

This is the business model. The upskilling industry operates exactly like a budget gym in January. They do not want you to use the equipment. If everyone who bought a tech course actually logged in, the servers would melt. The industry relies on the fact that, by commonly cited estimates, roughly ninety percent of people who enroll in Massive Open Online Courses never finish them. They are selling you the sensation of having done something about your career anxiety without the caloric expenditure of actually doing it.

Selling suppositories for imposter syndrome

The pressure does not just come from the manic performance art of LinkedIn. It comes from inside the house.

One morning, you get an email from HR about a new corporate AI readiness initiative. The phrasing strongly suggests that participation is voluntary. Of course it is. It is voluntary in the same way that handing over your wallet to a nervous man holding a broken bottle in a dark alley is voluntary. You do not have to do it, but the alternative involves a lot of messy paperwork and a sudden career transition.

Companies love these initiatives because they are trackable. You can put a dashboard on a PowerPoint slide and show the board of directors that eighty percent of the engineering department has been upskilled.

But Gartner research shows that nearly half of all corporate training is what they elegantly call scrap learning. This is knowledge that is delivered but never actually applied to the job. It is corporate junk food. You spend three hours learning how to write the perfect prompt for a proprietary AI tool, and by the time your performance review rolls around, the tool has been deprecated, the vendor has pivoted to a different business model, and you are still just trying to figure out why the production database is locking up every Tuesday at 3 PM.

Early in your career, you learned a new technology because it was genuinely exciting. It provided a new mental model for building things. You stayed up late reading documentation, not because a middle manager sent you a calendar invite, but because you could not stop thinking about the possibilities. The learning felt like building an extension onto a house you were just beginning to inhabit.

Now, you open an AI course because your company panicked after reading a Forbes article. The curiosity has been surgically removed, replaced by the grim mechanics of survival.

The shelf life of a prompt engineer

Here is the fundamental trick the training industry plays on us. They conflate perishable knowledge with durable skill.

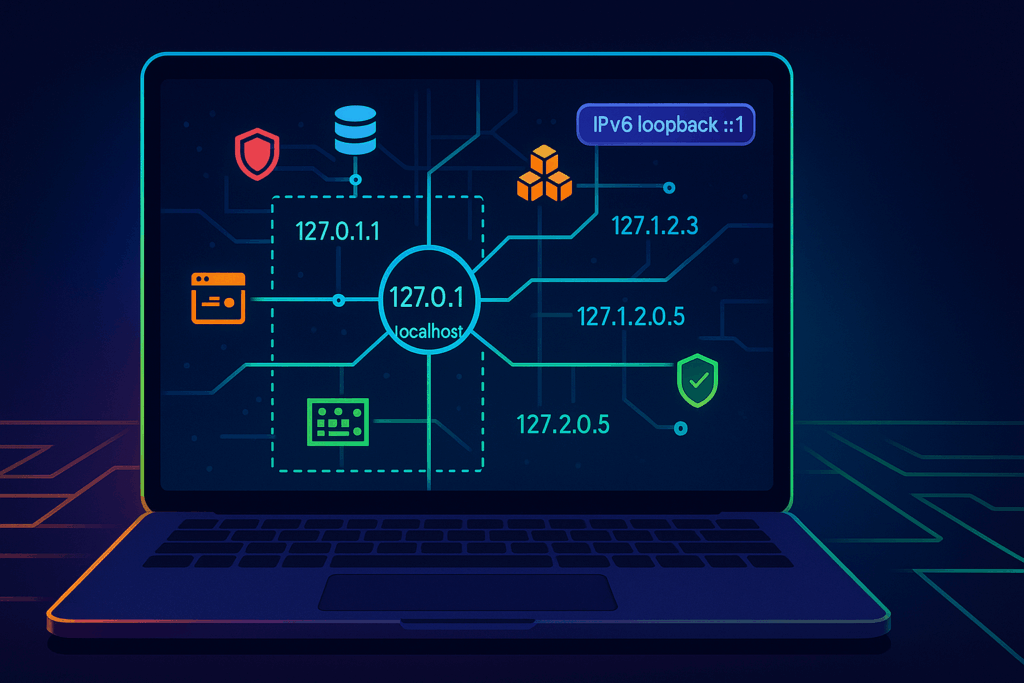

Perishable knowledge has the shelf life of an unrefrigerated avocado. It is the exact syntax for a specific API that will change completely in version two. It is a list of magic words to trick a specific chatbot into ignoring its safety constraints. It is knowing how to navigate the user interface of a cloud vendor dashboard that is scheduled for a total redesign next month.

Durable skill is entirely different. A durable skill is understanding how relational databases handle concurrency. It is the ability to read a latency graph like a seasoned cardiologist reads an electrocardiogram, instantly spotting the flutter of a failing network switch. It is knowing how to design a system that fails gracefully instead of taking the entire company down with it. It is the agonizing, hard-won intuition of knowing when an external vendor is lying to you about their uptime guarantees.

Durable skills do not look good on a digital badge. You cannot take a weekend course on how to develop a gut feeling about a poorly designed architecture. It takes years of getting burned by bad code, surviving late-night outages, and staring at logs until your eyes bleed.

The tragedy of the current AI hype cycle is that it forces brilliant engineers to abandon their compounding, durable skills to chase perishable trivia. It is like telling a master carpenter to drop his tools and spend three months learning how to optimize the instruction manual for an automated nail gun.

Compounding interest in the wrong direction

This brings us back to competence debt.

Every hour you spend forcing yourself to memorize the transient, undocumented quirks of an AI wrapper is an hour you did not spend deeply understanding the legacy systems you are actually paid to keep alive. Every superficial certificate you collect is a minimum payment on a debt of fundamental knowledge that keeps growing in the background.

You look productive. Your corporate training dashboard is completely green. Your profile is heavily peppered with the right buzzwords. But underneath it all, the foundational skills that would actually make you irreplaceable are quietly rusting from neglect.

The industry has taught us to call this frantic hamster wheel "growth." The corporate rubrics and performance metrics were meticulously designed to measure it. But the word we are all actually looking for is depreciation.

It is perfectly fine to ignore that browser tab. Let the microbial ecosystem thrive, or close the tab and close the guilt along with it. The next time you feel the panic rising when someone posts about their weekend AI project, take a deep breath. Remember that the ability to keep a messy, chaotic, real-world system running is a skill that no weekend bootcamp can teach.

Stop buying their expired anxiety, and go back to doing the real work.