A surprising number of AI platforms begin life with a question that sounds reasonable in a standup and catastrophic in a postmortem, something along the lines of “Can we just stick a GPU behind an API?” You can. You probably shouldn’t. AI workloads are not ordinary web services wearing a thicker coat. They behave differently, fail differently, scale differently, and cost differently, and an architecture that ignores those differences will eventually let you know, usually on a Sunday.

This article is not about how to train a model. It is about building an AWS architecture that can host AI workloads safely, scale them reliably, and keep the monthly bill within shouting distance of the original estimate.

Why AI workloads change the architecture conversation

Treating an AI workload as “the same thing, but with bigger instances” is a classic and very expensive mistake. Inference latency matters in milliseconds. Accelerator choice (GPU, Trainium, Inferentia) affects both performance and invoice. Traffic spikes are unpredictable because humans, not schedulers, trigger them. Model lifecycle and data lineage become first-class design concerns. Governance stops being a compliance checkbox and becomes the seatbelt that keeps sensitive information from ending up inside a prompt log.

Put differently, AI adds several new axes of failure to the usual cloud architecture, and pretending otherwise is how teams rediscover the limits of their CloudWatch alerting at 3 a.m.

Start with the use case, not the model

Before anyone opens the Bedrock console, the first design decision should be the business problem. A chatbot for internal knowledge, a document summarization pipeline, a fraud detection scorer, and an image generation service have almost nothing in common architecturally, even if they all happen to involve transformer models.

From the use case, derive the architectural drivers (latency budget, throughput, data sensitivity, availability target, model accuracy requirements, cost ceiling). These drivers decide almost everything else. The opposite workflow, picking the infrastructure first and then seeing what it can do, is how you end up with a beautifully optimized cluster solving a problem nobody asked about.
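One way to keep those drivers from evaporating after the kickoff meeting is to write them down as a small typed record that lives next to the design document. The sketch below is only an illustration: the class name, field names, and example values are assumptions, not part of any AWS API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ArchitecturalDrivers:
    """Drivers derived from the use case. All names here are illustrative."""

    use_case: str
    p95_latency_ms: int               # latency budget
    peak_requests_per_sec: float      # throughput
    data_sensitivity: str             # e.g. "public", "internal", "restricted"
    availability_target: float        # e.g. 0.999 for "three nines"
    min_accuracy: float               # model accuracy requirement, 0.0 to 1.0
    monthly_cost_ceiling_usd: float   # cost ceiling

    def allowed_downtime_minutes_per_month(self, days: int = 30) -> float:
        """Translate the availability target into a monthly downtime budget."""
        return (1.0 - self.availability_target) * days * 24 * 60


# Hypothetical example: an internal knowledge chatbot.
chatbot = ArchitecturalDrivers(
    use_case="internal knowledge chatbot",
    p95_latency_ms=2000,
    peak_requests_per_sec=20.0,
    data_sensitivity="internal",
    availability_target=0.999,
    min_accuracy=0.85,
    monthly_cost_ceiling_usd=5000.0,
)
```

Turning the availability target into minutes of allowed downtime tends to make the conversation about multi-AZ design more concrete than a percentage does.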

Choosing your AI path on AWS

AWS offers several paths, and they are not interchangeable. Here is a rough guide.

Amazon Bedrock is the right choice when you want managed foundation models, guardrails, agents, and knowledge bases without running the model infrastructure yourself. Good for teams that want to ship features, not operate GPUs.

Amazon SageMaker AI is the right choice when you need more control over training, deployment, pipelines, and MLOps. Good for teams with ML engineers who enjoy that sort of thing. Yes, they exist.

AWS accelerator-based infrastructure (Trainium, Inferentia2, SageMaker HyperPod) is the right choice when cost efficiency or raw performance at scale becomes the dominant constraint, typically for custom training or large-scale inference.

The common mistake here is picking the most powerful option by default. Bedrock with a sensible model is usually cheaper to operate than a custom SageMaker endpoint you forgot to scale down over Christmas.

The data foundation comes first

AI systems are a thin layer of cleverness on top of data. If the data layer is broken, the AI will be confidently wrong, which is worse than being uselessly wrong because people tend to believe it.

Answer the unglamorous questions first. Where does the data live? Who owns it? How fresh does it need to be? Who can see which parts of it? For generative AI workloads that use retrieval, add more questions. How are documents chunked? What embedding model is used? Which vector store? What metadata accompanies each chunk? How is the index refreshed when the source changes?
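To make the chunking and metadata questions concrete, here is a minimal sketch of a chunker that attaches metadata to every chunk. It assumes naive fixed-size character chunking with overlap, which is a simplification; real pipelines usually split on sentences or document structure. The function name, metadata keys, and the embedding model label are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    metadata: dict


def chunk_document(text, source_id, source_version, allowed_groups,
                   chunk_size=200, overlap=50,
                   embedding_model="example-embedding-model"):
    """Split text into overlapping character windows, each with metadata.

    The metadata records where the chunk came from, which source version it
    reflects (so stale chunks can be found on refresh), and who may see it.
    """
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for index, start in enumerate(range(0, len(text), step)):
        chunks.append(Chunk(
            text=text[start:start + chunk_size],
            metadata={
                "source_id": source_id,
                "source_version": source_version,
                "chunk_index": index,
                "allowed_groups": sorted(allowed_groups),
                "embedding_model": embedding_model,  # hypothetical label
            },
        ))
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks
```

Recording the source version and the embedding model on each chunk is a design choice that pays off later: when a document changes or the embedding model is swapped, you can find exactly which chunks need to be rebuilt.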

A poor data foundation produces a poor AI experience, even when the underlying model is state of the art. Think of the model as a very articulate intern; it will faithfully report whatever you put in front of it, including the typo in the policy document from 2019.

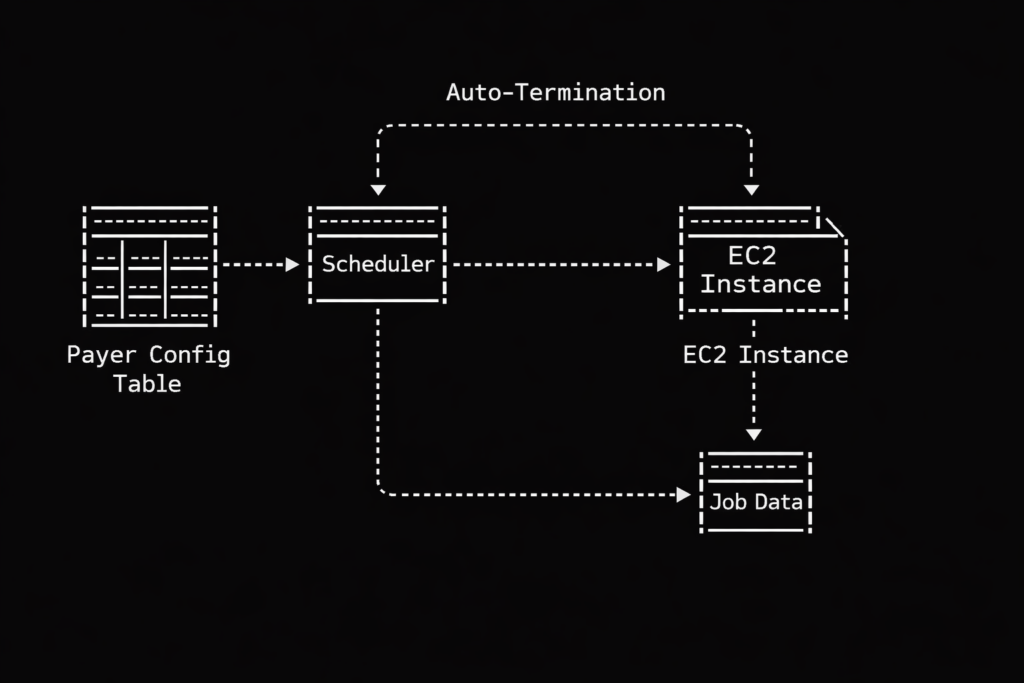

Designing compute for reality, not for demos

Training and inference are not the same workload and should rarely share the same architecture. Training is bursty, expensive, and tolerant of scheduling. Inference is always on, latency-sensitive, and intolerant of downtime, even when its traffic is spiky. A single “AI cluster” that tries to do both tends to be bad at each.

For inference, focus on right-sizing, dynamic scaling, and high availability across Availability Zones. For training, focus on ephemeral capacity, checkpointing, and data pipeline throughput. For serving large models, consider whether Bedrock’s managed inference removes enough operational burden to justify its pricing compared to self-hosted inference on EC2 or EKS with Inferentia2.

And please, autoscale. A fixed-size fleet of GPU instances running at 3% utilization is a monument to optimism.
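The arithmetic behind that advice is simple. The sketch below uses a proportional rule similar in spirit to target-tracking scaling; it is not AWS's exact algorithm, and the function name and parameters are assumptions made for illustration.

```python
import math


def desired_instances(current, observed_utilization, target_utilization,
                      min_instances, max_instances):
    """Proportional scaling: size the fleet so utilization lands near target.

    Illustrative only. Real autoscalers add cooldowns, warm-up periods,
    and smoothing over several metric samples.
    """
    if target_utilization <= 0:
        raise ValueError("target_utilization must be positive")
    raw = math.ceil(current * observed_utilization / target_utilization)
    return max(min_instances, min(max_instances, raw))


# Ten instances at 3% utilization against a 60% target: one is enough.
print(desired_instances(10, 3, 60, min_instances=1, max_instances=20))  # 1
# Four instances at 90% against the same target: scale out to six.
print(desired_instances(4, 90, 60, min_instances=1, max_instances=20))  # 6
```

The minimum and maximum bounds matter as much as the formula: the minimum protects availability, and the maximum protects the invoice.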

Treating inference as a production workload

Many AI articles spend chapters on models and a paragraph on serving them, which is roughly the opposite of how the effort is distributed in real projects. Inference is where the workload meets reality, and reality brings concurrency, timeouts, thundering herds, and users who click the retry button like they are trying to start a stubborn lawnmower.

Plan for all of it. Set timeouts. Configure throttling and quotas. Add rate limiting at the edge. Use exponential backoff. Put circuit breakers between your application tier and your AI tier so a slow model does not take the whole product down. AWS explicitly recommends rate limiting and throttling as part of protecting generative AI systems from overload, and they recommend it because they have seen what happens without it.
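As a rough illustration of two of those protections working together, here is a minimal sketch of exponential backoff with full jitter wrapped around a simple circuit breaker. The class and function names are hypothetical, the thresholds are placeholders, and a production system would more likely use an established resilience library than hand-rolled code like this.

```python
import random
import time


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are rejected immediately."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at = None

    def call(self, fn):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                raise CircuitOpenError("AI tier circuit is open; failing fast")
            # Cool-down elapsed: allow one trial call (half-open state).
            # One more failure reopens the circuit immediately.
            self._opened_at = None
            self._failures = self.failure_threshold - 1
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # any success closes the circuit fully
        return result


def backoff_delays(retries, base_s=0.2, cap_s=5.0, rng=random.random):
    """Full jitter: each delay is uniform in [0, min(cap, base * 2**attempt))."""
    return [rng() * min(cap_s, base_s * 2 ** attempt)
            for attempt in range(retries)]


def call_with_retries(fn, breaker, retries=3, sleep=time.sleep):
    """Retry fn through the breaker, backing off between failed attempts."""
    delays = backoff_delays(retries)
    for attempt in range(retries + 1):
        try:
            return breaker.call(fn)
        except CircuitOpenError:
            raise  # do not retry against an open circuit
        except Exception:
            if attempt == retries:
                raise
            sleep(delays[attempt])
```

The important design choice is that an open circuit stops the retry loop. Retries without a breaker are how a slow model turns into a self-inflicted denial of service.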

Protecting inference is not mainly about safety. It is about surviving the traffic spike after your launch gets a mention somewhere popular.

Separating application, AI, and data responsibilities

A quietly important architectural point is that the AI tier should not share an account, an IAM boundary, or a blast radius with the application that calls it. AWS security guidance increasingly points toward separating the application account from the generative AI account. The reasoning is simple: the consequences of a mistake in prompt construction, data retrieval, or model output are different from the consequences of a mistake in, say, a shopping cart service, and they deserve different controls.

Think of it as the organizational version of not keeping your passport in the same drawer as your house keys. If one goes missing, the other is still where it should be.

Security and guardrails from day one

AI-specific controls sit on top of the usual cloud security hygiene (IAM least privilege, encryption at rest and in transit, VPC endpoints, logging, data classification). You need four additions. Approved model catalogues, so teams cannot quietly wire up any foundation model they saw on Hacker News. Prompt governance, meaning templates, input validation, and logging policies that do not accidentally store sensitive data forever. Output filtering for harmful content, PII leakage, and jailbreak attempts. And clear data classification policies that decide which data is allowed to reach which model.

For Bedrock-based systems, Amazon Bedrock Guardrails offer configurable safeguards for harmful content and sensitive information. They are not magic, but they save a surprising amount of custom work, and custom work in this area tends to age badly.
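To show what "logging policies that do not store sensitive data forever" can look like at the smallest possible scale, here is a toy redaction pass applied to text before it is written to a prompt log. The two regular expressions are deliberately simplistic assumptions (an email pattern and a US-style SSN pattern) and will miss plenty of real PII; a managed safeguard or a dedicated PII detection service is the more realistic choice in production.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text):
    """Replace matches with a label and report how many of each were found."""
    counts = {}
    for label, pattern in _PATTERNS.items():
        text, found = pattern.subn(f"[{label}]", text)
        counts[label] = found
    return text, counts


safe_text, counts = redact("Contact alice@example.com, SSN 123-45-6789.")
print(safe_text)  # Contact [EMAIL], SSN [SSN].
```

Returning the counts alongside the redacted text gives you a cheap metric: a sudden rise in redactions per request is worth an alert of its own.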

Governance is not bureaucracy. Governance is what lets your AI feature get through a security review without being rewritten twice.

Protecting the retrieval layer when you use RAG

Retrieval-augmented generation is often described as “LLM plus documents”, which is technically true and practically misleading. A production RAG system involves ingestion pipelines, embedding generation, a vector store, metadata design, and ongoing synchronization with source systems. Each of those is a place where things can quietly go wrong.

One specific point is worth emphasizing. User identity must propagate to the retrieval layer. If Alice asks a question, the knowledge base should only return chunks Alice is allowed to see. AWS guidance recommends enforcing authorization through metadata filtering so users only get results they have access to. Without this, your RAG system will happily summarize the CFO’s compensation memo for the summer intern, which is the sort of thing that gets architectures shut down by email.
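A minimal sketch of that idea follows, assuming each indexed chunk carries an `allowed_groups` metadata field and that the caller's group membership is known at query time. The types and function name are hypothetical. The filter is applied in application code here for readability; in a real vector store you would pass it into the query so unauthorized chunks are never retrieved at all.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedChunk:
    text: str
    score: float               # similarity score from the vector search
    allowed_groups: frozenset  # groups permitted to see this chunk


def authorized_results(candidates, user_groups, top_k=3):
    """Keep only chunks the user may see, then return the best top_k."""
    user_groups = frozenset(user_groups)
    visible = [c for c in candidates if c.allowed_groups & user_groups]
    return sorted(visible, key=lambda c: c.score, reverse=True)[:top_k]


index = [
    IndexedChunk("CFO compensation memo", 0.97, frozenset({"finance-exec"})),
    IndexedChunk("Employee handbook, leave policy", 0.81, frozenset({"all-staff"})),
    IndexedChunk("Expense policy", 0.64, frozenset({"all-staff", "finance-exec"})),
]

intern_view = authorized_results(index, {"all-staff"})
print([c.text for c in intern_view])
# ['Employee handbook, leave policy', 'Expense policy']
```

Note that the highest-scoring chunk is exactly the one the intern must not see, which is why the authorization filter has to run before ranking results are handed to the model.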

Observability goes beyond CPU and memory

Traditional observability (CPU, memory, latency, error rates) is necessary but insufficient for AI workloads. For these systems, you also want to track model quality and drift over time; retrieval quality (are the right chunks being returned?); prompt behavior and common failure modes; token usage per request, per tenant, and per feature; latency per model, not just per service; and user feedback signals. Thumbs-up and thumbs-down may be the cheapest useful telemetry ever invented.

Amazon Bedrock provides evaluation capabilities, and SageMaker Model Monitor covers drift and model quality in production. Use them. If you run your own inference, budget time for custom metrics, because the default dashboards will tell you the endpoint is healthy right up until users stop trusting its answers.
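As an example of the kind of custom metric worth budgeting for, here is a small in-memory sketch that tallies token usage and latency by tenant, feature, or model. The class name and record fields are assumptions; a real system would emit these values to a metrics backend rather than keep them in a Python list.

```python
from collections import defaultdict


class TokenUsageLedger:
    """In-memory tally of per-request usage. Illustrative, not production code."""

    DIMENSIONS = ("tenant", "feature", "model_id")

    def __init__(self):
        self._records = []

    def record(self, tenant, feature, model_id,
               input_tokens, output_tokens, latency_ms):
        self._records.append({
            "tenant": tenant,
            "feature": feature,
            "model_id": model_id,
            "input_tokens": input_tokens,
            "output_tokens": output_tokens,
            "latency_ms": latency_ms,
        })

    def totals_by(self, dimension):
        """Sum requests, tokens, and latency grouped by one dimension."""
        if dimension not in self.DIMENSIONS:
            raise ValueError(f"dimension must be one of {self.DIMENSIONS}")
        totals = defaultdict(lambda: {
            "requests": 0, "input_tokens": 0,
            "output_tokens": 0, "latency_ms_total": 0,
        })
        for rec in self._records:
            bucket = totals[rec[dimension]]
            bucket["requests"] += 1
            bucket["input_tokens"] += rec["input_tokens"]
            bucket["output_tokens"] += rec["output_tokens"]
            bucket["latency_ms_total"] += rec["latency_ms"]
        return dict(totals)
```

Dividing `latency_ms_total` by `requests` per model gives the per-model latency view that a service-level dashboard hides, and the per-tenant token totals are usually the first thing finance asks for.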

AI operations is not a different discipline. It is mature operations thinking applied to a stack where “the service works” and “the service is useful” are two different statements.

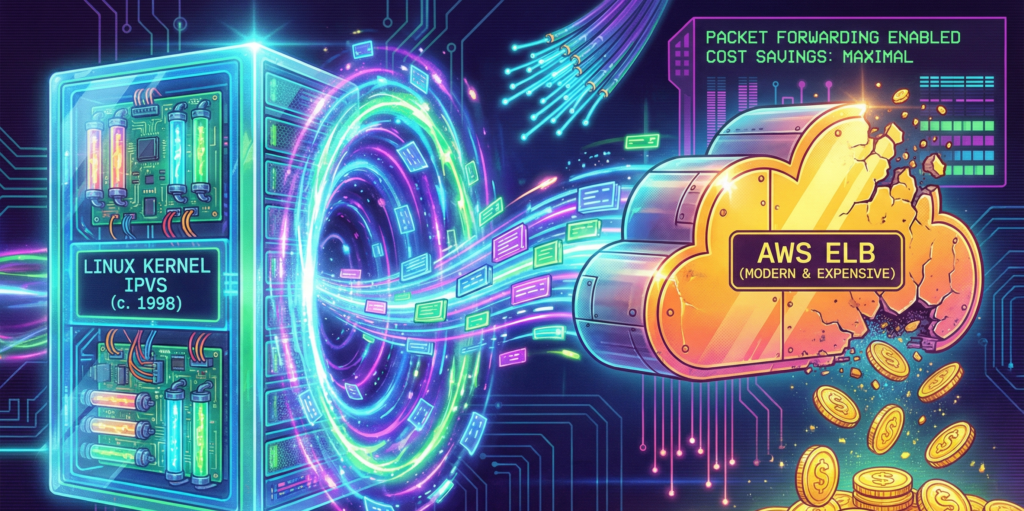

Cost optimization belongs in the first draft

Cost should be a design constraint, not a debugging session six weeks after launch. The biggest levers, roughly in order of impact, are the following.

Model choice. Smaller models are cheaper and often good enough. Not every feature needs the largest frontier model in the catalogue.

Inference mode. Real-time endpoints, batch inference, serverless inference, and on-demand Bedrock invocations have wildly different cost profiles. Match the mode to the traffic pattern, not the other way around.

Autoscaling policy. Scale to zero where possible. Keep the minimum capacity honest.

Hardware choice. Inferentia2 and Trainium are positioned specifically for cost-effective ML deployment, and they often deliver on that positioning.

Batching. Batching inference requests can dramatically improve throughput per dollar for workloads that tolerate small latency increases.
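The batching lever can be sketched as a micro-batcher that closes a batch when it is full or when the oldest request in it has waited long enough. This is a simplified offline model over a list of arrival timestamps, with hypothetical names and placeholder limits; a live server would do the same thing with a queue and a timer.

```python
def micro_batches(arrivals, max_batch_size=8, max_wait_ms=20):
    """Group requests into batches.

    arrivals: list of (timestamp_ms, request_id), sorted by timestamp.
    A batch is closed when it reaches max_batch_size, or when a new request
    arrives more than max_wait_ms after the first request in the batch.
    """
    batches = []
    current = []
    window_start = None
    for timestamp_ms, request_id in arrivals:
        full = len(current) >= max_batch_size
        expired = bool(current) and timestamp_ms - window_start > max_wait_ms
        if full or expired:
            batches.append(current)
            current = []
        if not current:
            window_start = timestamp_ms  # this request opens a new batch
        current.append(request_id)
    if current:
        batches.append(current)
    return batches


arrivals = [(0, "a"), (5, "b"), (10, "c"), (50, "d"), (55, "e")]
print(micro_batches(arrivals, max_batch_size=2, max_wait_ms=20))
# [['a', 'b'], ['c'], ['d', 'e']]
```

The `max_wait_ms` value is the latency you are willing to trade for throughput, so it belongs in the same conversation as the latency budget.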

A common failure mode is the impressive prototype with the terrifying monthly bill. Put cost targets in the design document next to the latency targets, and revisit both before go-live.
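A back-of-the-envelope comparison is often enough to put a cost target in the design document. The sketch below compares per-token on-demand pricing with an always-on endpoint and finds the break-even request volume. Every price and token count in it is a made-up placeholder, not actual AWS pricing; substitute the current figures for your model and region.

```python
def on_demand_monthly_cost(requests, input_tokens, output_tokens,
                           usd_per_1k_input, usd_per_1k_output):
    """Monthly cost when paying per token (placeholder prices)."""
    per_request = (input_tokens / 1000 * usd_per_1k_input
                   + output_tokens / 1000 * usd_per_1k_output)
    return requests * per_request


def always_on_monthly_cost(instances, usd_per_instance_hour, hours=730):
    """Monthly cost of a fixed fleet, using roughly 730 hours per month."""
    return instances * usd_per_instance_hour * hours


def break_even_requests(instances, usd_per_instance_hour,
                        input_tokens, output_tokens,
                        usd_per_1k_input, usd_per_1k_output):
    """Monthly request volume at which both options cost the same."""
    per_request = on_demand_monthly_cost(
        1, input_tokens, output_tokens, usd_per_1k_input, usd_per_1k_output)
    return always_on_monthly_cost(instances, usd_per_instance_hour) / per_request


# Hypothetical numbers: 1,000 input and 500 output tokens per request.
volume = break_even_requests(
    instances=2, usd_per_instance_hour=1.50,
    input_tokens=1000, output_tokens=500,
    usd_per_1k_input=0.001, usd_per_1k_output=0.002,
)
print(round(volume))  # monthly requests where the two options break even
```

Below the break-even volume, paying per token is cheaper under these assumptions; above it, a well-utilized dedicated fleet starts to win, provided it is actually well utilized.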

Close with an operating model, not just a diagram

An architecture diagram is the opening paragraph of the story, not the whole book. What makes an AI platform sustainable is the operating model around it (versioning, CI/CD or MLOps/LLMOps pipelines, evaluation suites, rollback strategy, incident response, and clear ownership between platform, data, security, and application teams).

AWS guidance for enterprise-ready generative AI consistently stresses repeatable patterns and standardized approaches, because that is what turns successful experiments into durable platforms rather than fragile demos held together by one engineer’s tribal knowledge.

What separates a platform from a demo

Preparing a cloud architecture for AI on AWS is not mainly about buying GPU capacity. It is about designing a platform where data, models, security, inference, observability, and cost controls work together from the start. The teams that do well with AI are not the ones with the biggest clusters; they are the ones who took the boring parts seriously before the interesting parts broke.

If your AI architecture is running quietly, scaling predictably, and costing roughly what you expected, congratulations, you have done something genuinely difficult, and nobody will notice. That is always how it goes.