First up, let’s shine a spotlight on these two powerhouses:

- AWS IAM (Identity and Access Management): Picture this as the ultimate bouncer at the hottest club in town; let’s call it Club AWS. AWS IAM is all about who gets into the VIP section: those precious AWS resources like EC2 instances, S3 buckets, and Lambda functions. It’s your tool to create users, assemble groups, define roles, and attach permission policies with the precision of a laser beam, deciding who can enter and what they can touch.

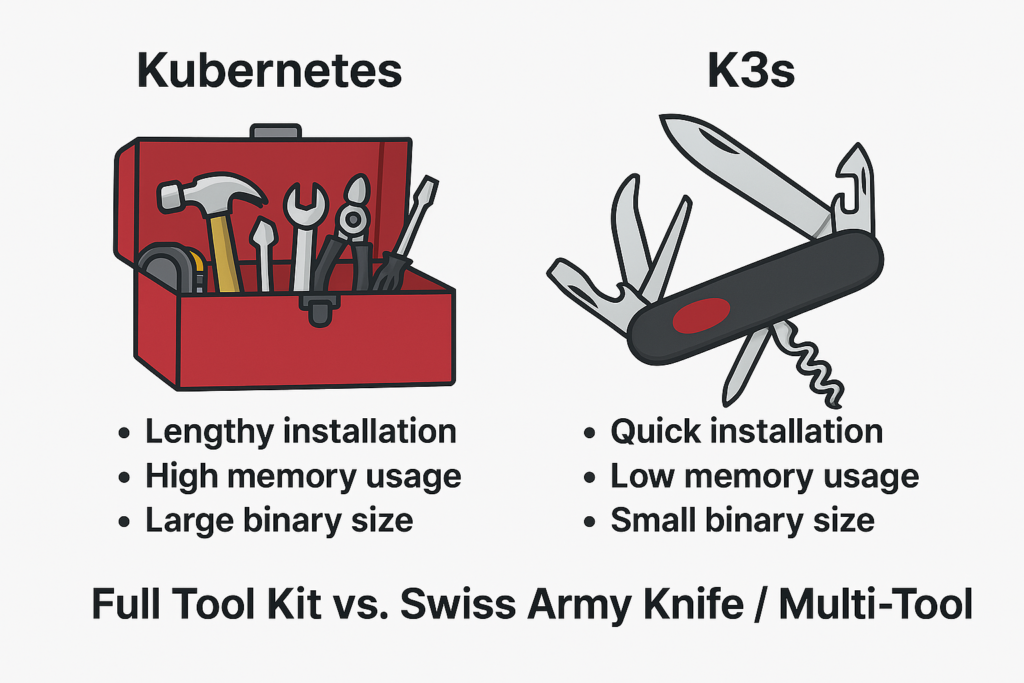

- Azure AD (Azure Active Directory, renamed Microsoft Entra ID in 2023): Now, imagine a super-bouncer with a clipboard that covers not just one club but an entire network of venues. Azure AD is Microsoft’s cloud-based identity maestro, managing access across a sprawling galaxy of services: Microsoft 365, Azure itself, and even thousands of third-party apps. It’s the Swiss Army knife of identity management, juggling credentials like a cosmic DJ spinning tracks for the multiverse.
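Back at Club AWS for a moment: to make the bouncer’s “who can enter and what they can touch” logic concrete, here is a minimal Python sketch of how AWS IAM decides on a request, assuming only identity-based policies are in play. Everything starts out denied, a matching Allow grants access, and any matching Deny overrides every Allow. The policy dicts follow the shape of IAM’s JSON policy documents, but the bucket name `club-aws-guestlist`, the user and group records, and the helpers `effective_policies` and `is_allowed` are hypothetical; real IAM also weighs resource-based policies, permission boundaries, SCPs, session policies, and conditions.

```python
from fnmatch import fnmatchcase

# Hypothetical, simplified model of AWS IAM evaluation for identity-based
# policies only. Real IAM also weighs resource-based policies, permission
# boundaries, SCPs, session policies, and Condition blocks.

READ_GUEST_LIST = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::club-aws-guestlist/*"},
    ],
}

NO_DELETES = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Deny", "Action": "s3:Delete*", "Resource": "*"},
    ],
}

def as_list(value):
    # IAM lets Statement, Action, and Resource be a single value or a list
    return value if isinstance(value, list) else [value]

def matches(patterns, value, ignore_case=False):
    # Approximates IAM's * and ? wildcards with shell-style matching
    if ignore_case:
        value = value.lower()
        patterns = [p.lower() for p in patterns]
    return any(fnmatchcase(value, p) for p in patterns)

def effective_policies(user, groups):
    # Policies attached to the user plus those of every group the user is in
    policies = list(user["policies"])
    for group in user["groups"]:
        policies.extend(groups[group])
    return policies

def is_allowed(policies, action, resource):
    allowed = False  # start from an implicit deny
    for policy in policies:
        for stmt in as_list(policy["Statement"]):
            # Action names are case-insensitive; resource ARNs are not
            if not matches(as_list(stmt["Action"]), action, ignore_case=True):
                continue
            if not matches(as_list(stmt["Resource"]), resource):
                continue
            if stmt["Effect"] == "Deny":
                return False  # an explicit deny beats any allow
            allowed = True  # explicit allow, unless a deny matches later
    return allowed

groups = {"bouncers": [READ_GUEST_LIST]}
alice = {"policies": [NO_DELETES], "groups": ["bouncers"]}
policies = effective_policies(alice, groups)
vip = "arn:aws:s3:::club-aws-guestlist/vip.txt"

print(is_allowed(policies, "s3:GetObject", vip))        # True
print(is_allowed(policies, "s3:DeleteObject", vip))     # False (explicit deny)
print(is_allowed(policies, "ec2:StartInstances", "*"))  # False (implicit deny)
```

Alice inherits read access to the guest list from her group, but the Deny attached directly to her means she can never delete from it, and anything no policy mentions stays behind the velvet rope by default.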

The cosmic differences

So, what sets these two apart? Let’s break it down into bite-sized, star-sized chunks:

- Scope: AWS IAM is a specialist focused on the AWS ecosystem, guarding it like a hawk guards its nest. Azure AD? It’s the broad-visioned explorer, managing identities across Microsoft’s empire and beyond, easily reaching into third-party territory.

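- Features: Both bring heavy-hitting security, and multi-factor authentication is their shared superpower. AWS IAM policies can carry conditions too (for example, requiring MFA through the `aws:MultiFactorAuthPresent` condition key), but Azure AD ups the ante with Conditional Access policies that weigh the context of each sign-in, letting you say, “Only let them in if they’re calling from a trusted galaxy or wielding the right device.”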

- Integration: AWS IAM is the loyal sidekick to AWS services, meshing seamlessly with its kin. Azure AD, though, is the extroverted networker, linking up with Microsoft 365, Azure, and a constellation of SaaS apps—think of it as the life of the cloud party.

- User Management: AWS IAM keeps it tight, handling users, groups, and roles within the AWS kingdom. Azure AD goes wide, overseeing users and groups across your entire organization, whether they live in the cloud or are synced from an on-premises Active Directory.

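- Authentication and Authorization: Both are fortress-strong. AWS IAM checks every request against its JSON policies and hands out short-lived credentials through roles, while Azure AD flexes extra muscle with risk-based checks that can demand MFA or block a sign-in when something looks off, such as a login from an unfamiliar location.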
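To picture Azure AD’s context-aware checkpoint, here is a hypothetical toy model in Python. It is not Microsoft’s implementation or the Microsoft Graph API: the `SignIn` and `AccessPolicy` classes, their fields, and the `evaluate` function are invented for illustration. Under those assumptions it mirrors two behaviors of Conditional Access: every policy that applies to a sign-in must be satisfied, and a block decision overrides everything else.

```python
from dataclasses import dataclass

# Hypothetical toy model of Azure AD (Microsoft Entra ID) Conditional Access.
# Class and field names are illustrative only, not Microsoft Graph API shapes.

@dataclass
class SignIn:
    user: str
    app: str
    trusted_location: bool   # signing in from a named, trusted network?
    compliant_device: bool   # device marked compliant by device management?
    mfa_completed: bool      # has the user already passed MFA?

@dataclass
class AccessPolicy:
    name: str
    apps: set[str]           # target apps; "*" means every app
    block_untrusted_location: bool = False
    require_mfa: bool = False
    require_compliant_device: bool = False

    def applies_to(self, signin: SignIn) -> bool:
        return "*" in self.apps or signin.app in self.apps

def evaluate(signin: SignIn, policies: list[AccessPolicy]) -> tuple[str, list[str]]:
    """Return ("block", [policy]), ("unmet", [controls]) or ("grant", [])."""
    unmet = []
    for policy in policies:
        if not policy.applies_to(signin):
            continue
        # A block control overrides every other outcome
        if policy.block_untrusted_location and not signin.trusted_location:
            return ("block", [policy.name])
        # Collect controls this sign-in has not satisfied yet
        if policy.require_mfa and not signin.mfa_completed:
            unmet.append("mfa")
        if policy.require_compliant_device and not signin.compliant_device:
            unmet.append("compliant_device")
    # Access is granted only when every applicable policy is satisfied
    if unmet:
        return ("unmet", sorted(set(unmet)))
    return ("grant", [])

policies = [
    AccessPolicy("Block payroll outside trusted galaxies", {"payroll"},
                 block_untrusted_location=True),
    AccessPolicy("MFA for every app", {"*"}, require_mfa=True),
]

cafe = SignIn("alice", "mail", trusted_location=False,
              compliant_device=True, mfa_completed=False)
print(evaluate(cafe, policies))          # ('unmet', ['mfa'])

cafe_payroll = SignIn("alice", "payroll", trusted_location=False,
                      compliant_device=True, mfa_completed=True)
print(evaluate(cafe_payroll, policies))  # ('block', ['Block payroll outside trusted galaxies'])

office = SignIn("alice", "payroll", trusted_location=True,
                compliant_device=True, mfa_completed=True)
print(evaluate(office, policies))        # ('grant', [])
```

Real Conditional Access policies can also target specific users and groups, device platforms, and sign-in risk levels; the point of the sketch is only that the same user gets different answers depending on where and how she signs in.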

Which reigns supreme?

Now, here comes the supernova query: Which one is better? Hold onto your hats, because this isn’t a one-size-fits-all answer; it’s more like choosing between a lightsaber and a sonic screwdriver. Context is everything!

- Team AWS IAM: If your universe revolves around AWS, IAM is your trusty guide. It’s deeply woven into the AWS fabric, offering pinpoint control over your resources. It’s the master key to your AWS kingdom.

- Team Azure AD: If you’re dreaming of a broader empire, one that spans Microsoft services and a galaxy of apps, Azure AD is your universal remote. It shines brightest in Microsoft-centric worlds or when you need versatility across platforms.

Here’s a mind-blowing nugget to ponder: Azure AD reportedly keeps the gates for over 200,000 organizations worldwide. That’s like being the bouncer for every club in a sprawling, intergalactic mega-city!

The verdict (with a twist)

So, who wins this cosmic clash? AWS IAM is a champ in its domain, unrivaled for AWS loyalists. But Azure AD? It’s the disruptor, the game-changer, edging ahead with its flexibility and integration prowess. It’s not just a tool; it’s a bridge to the future of identity management.

But here’s the kicker: the “better” choice is the one that fits your orbit. Are you locked into AWS, or are you roaming the wilds of a multi-cloud universe? That’s the real question.

What’s your take, cosmic travelers? Are you Team AWS IAM, guarding the VIP lounge, or Team Azure AD, rewriting the rules of the cloud? Drop your thoughts below; I’m all ears for this interstellar debate!