If your pants feel a little tight after a large Thanksgiving meal, the logical solution is to discreetly unbutton them. You do not typically hire a hitman to assassinate yourself, clone your DNA in a vat, and grow a slightly wider version of yourself just to digest dessert. Yet, for nearly a decade, this is exactly how Kubernetes handled resource management.

When an application slowly consumed all its allocated memory, the standard orchestration response was absolute, violent destruction. We did not give the application more memory. We shot it in the head, deleted its entire existence, and spun up a brand new replica with a slightly larger plate. Connections dropped. Warm caches evaporated. State was lost in the digital wind.

Thankfully, the era of the Kubernetes firing squad is drawing to a close. In-place pod resizing has officially graduated to stable General Availability in Kubernetes v1.35, and it changes the fundamental physics of how we manage workloads. We can finally stop burning down the house just to buy a bigger sofa.

Let us explore how this works, why it is practically miraculous, and how to use it without accidentally angering the Linux kernel.

The historical absurdity of pod resource management

Before in-place resizing, if you wanted to change the CPU or memory allocated to a running pod, the workflow was brutally simplistic. You updated your Deployment specification with the new resource requests and limits. The Kubernetes control plane saw the discrepancy between the desired state and the current state. The control plane then instructed the Kubelet to terminate the old pod and create a new one.

Think of taking your car to the mechanic because you need thicker tires. Instead of swapping the tires, the mechanic puts your car into an industrial crusher, hands you an identical car with thicker tires, and tells you to reprogram your radio stations. Sure, the rolling update strategy ensured you had a backup car to drive while the primary one was being crushed, but the specific pod doing the heavy lifting was gone.

For stateless microservices written in Go, this was barely an inconvenience. For a massive Java Virtual Machine holding gigabytes of cached data, or a machine learning inference service handling a traffic spike, a restart was a traumatic event. It meant minutes of downtime, CPU spikes during startup, and a slew of unhappy alerts.

Enter the era of in-place resizing

The magic of Kubernetes v1.35 is that the “resources.requests” and “resources.limits” fields within a pod specification are no longer immutable. You can edit them on the fly.

Under the hood, Kubernetes is finally taking full advantage of Linux control groups (cgroups). A container is basically just a regular Linux process trapped in a highly restrictive administrative box. The kernel has always had the ability to move the walls of this box without killing the process inside. If a container needs more memory, the kernel simply adjusts the cgroup memory limit. It is like a landlord quietly sliding a partition wall outward while you are still sleeping in your bed.
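
To make the sliding wall concrete, here is a minimal Python sketch of what a memory resize boils down to on a cgroup v2 system: the limit file memory.max is rewritten while the process inside keeps running. The helper names are hypothetical and a temporary directory stands in for the container's cgroup, which is an assumption for illustration. On a real node the container runtime performs this write under /sys/fs/cgroup, and you should not do it by hand.

```python
import tempfile
from pathlib import Path


def read_memory_max(cgroup_dir: Path) -> str:
    """Return the current limit: a byte count, or the literal 'max'."""
    return (cgroup_dir / "memory.max").read_text().strip()


def set_memory_max(cgroup_dir: Path, limit_bytes: int) -> None:
    """Rewrite the limit in place; processes in the cgroup are not touched."""
    (cgroup_dir / "memory.max").write_text(f"{limit_bytes}\n")


# Assumption: a temporary directory plays the role of the container's cgroup.
fake_cgroup = Path(tempfile.mkdtemp())
set_memory_max(fake_cgroup, 2 * 1024**3)  # the container starts with 2Gi
set_memory_max(fake_cgroup, 4 * 1024**3)  # the wall slides out to 4Gi
print(read_memory_max(fake_cgroup))       # 4294967296
```

Nothing in this sketch starts or stops a process, which is the whole point: the limit is just a number the kernel consults.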

Here is a sanitized example of how you might update a running pod. Notice how we are just applying a patch to an existing, actively running resource.

```bash
# Patch the running pod to raise its memory limit.
# Resource changes on a live pod go through the resize subresource.
# No restarts, no dropped connections, just instant gratification.
kubectl patch pod bloated-legacy-api-7b89f5c --subresource resize \
  -p '{"spec":{"containers":[{"name":"main-app","resources":{"limits":{"memory":"4Gi"}}}]}}'
```

It feels almost illegal the first time you do it. The pod keeps running, the uptime counter keeps ticking, but suddenly the application has breathing room.
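
If you would rather not hand-type nested JSON at 3 a.m., the same patch can be assembled programmatically. The following is a small Python sketch, not an official client: the function name build_resize_command is hypothetical, and it assumes a kubectl recent enough to accept the resize subresource on patch.

```python
import json
import shlex


def build_resize_command(pod: str, container: str, memory_limit: str) -> list[str]:
    """Return the kubectl argv for an in-place memory limit resize."""
    patch = {
        "spec": {
            "containers": [
                {"name": container, "resources": {"limits": {"memory": memory_limit}}}
            ]
        }
    }
    return [
        "kubectl", "patch", "pod", pod,
        "--subresource", "resize",
        "--patch", json.dumps(patch),
    ]


argv = build_resize_command("bloated-legacy-api-7b89f5c", "main-app", "4Gi")
print(shlex.join(argv))  # shell-quoted, ready to paste
```

Building the argument list first and quoting it with shlex.join keeps the JSON intact no matter which shell ends up running the command.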

The bureaucratic lifecycle of asking for more RAM

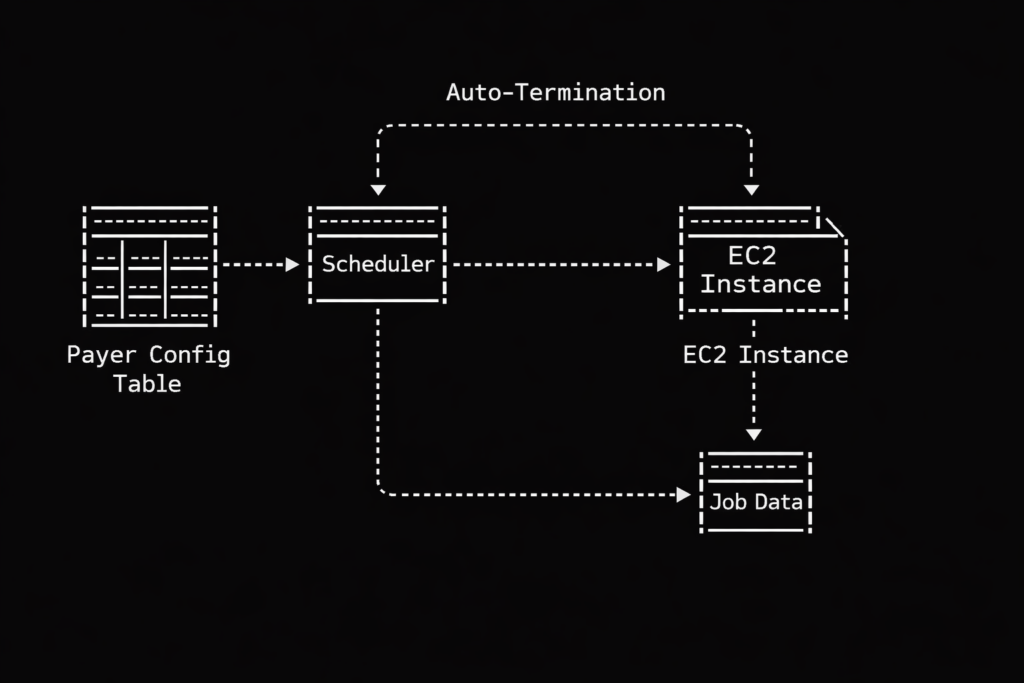

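Of course, you cannot just demand more resources and expect the universe to instantly comply. The physical node hosting your pod must actually have the spare CPU or memory available. To manage this negotiation, Kubernetes reports the progress of a resize in the pod status. The original design used a dedicated status field called resize; since v1.33 the pending and in-progress states are surfaced as pod conditions (PodResizePending and PodResizeInProgress) instead.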

This field tracks the bureaucratic process of your resource request. It is very much like dealing with the Department of Motor Vehicles, but measured in milliseconds. The statuses are surprisingly descriptive.

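First, there is Proposed. This means your request for more resources has been acknowledged. The paperwork is on the desk, but nobody has stamped it yet. This state belonged to the early resize field and is no longer reported separately in current releases.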

Second, we have InProgress. The Kubelet has accepted the request and is currently asking the container runtime (like containerd or CRI-O) to adjust the cgroup limits. The walls are physically moving.

Third, you might see Deferred. This is the orchestrator politely telling you that the node is currently full. Your pod is on a waitlist. As soon as another pod terminates or frees up space, your resize request will be processed. You do not get an error, but you also do not get your RAM. You just wait.

Finally, there is Infeasible. This is Kubernetes looking at you with deep, profound disappointment. You probably asked for 64 Gigabytes of memory on a tiny virtual machine that only has 8 Gigabytes total. The Kubelet essentially stamps your form with a big red “DENIED” and moves on with its life.
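
The difference between Deferred and Infeasible is easier to see as arithmetic. Below is a deliberately simplified Python model of the decision, assuming the node checks the new request against its allocatable capacity and against what is currently free. The function name and parameters are hypothetical, and the real Kubelet logic considers more than this.

```python
def classify_resize(new_request: int, current_request: int,
                    node_allocatable: int, node_requested: int) -> str:
    """Classify a resize of one resource; all values use the same unit.

    node_requested is the sum of requests of every pod on the node,
    including the pod being resized at its current size.
    """
    if new_request > node_allocatable:
        return "Infeasible"  # could never fit on this node
    free = node_allocatable - node_requested
    if new_request - current_request > free:
        return "Deferred"    # fits in principle, but not right now
    return "InProgress"      # can be handed to the container runtime


GIB = 1024**3
print(classify_resize(64 * GIB, 2 * GIB, 8 * GIB, 6 * GIB))  # Infeasible
print(classify_resize(4 * GIB, 2 * GIB, 8 * GIB, 7 * GIB))   # Deferred
print(classify_resize(4 * GIB, 2 * GIB, 8 * GIB, 5 * GIB))   # InProgress
```

The first call is the 64 Gigabytes on an 8 Gigabyte machine from the paragraph above. The second is the waitlist case: the extra 2 Gigabytes would fit on the node, just not while its neighbours are holding 7 of the 8.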

Negotiating with stubborn runtimes using resize policies

Not all applications are smart enough to realize they have been gifted more resources. Node.js or Go applications will happily consume newly available CPU cycles without being told. Java applications, on the other hand, are like stubborn mules. If you start a JVM with a maximum heap size of 2 Gigabytes, giving the container 4 Gigabytes of memory will accomplish absolutely nothing. The JVM will stubbornly refuse to look at the new memory until you restart it.

To handle these varying levels of application intelligence, Kubernetes gives us the resizePolicy array. This allows you to define exactly how the Kubelet should handle changes for each specific resource type.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: stubborn-java-beast
spec:
  containers:
    - name: heavy-calculator
      image: corporate-repo/calculator:v4
      resizePolicy:
        - resourceName: cpu
          restartPolicy: NotRequired
        - resourceName: memory
          restartPolicy: RestartContainer
      resources:
        requests:
          memory: "2Gi"
          cpu: "1"
        limits:
          memory: "4Gi"
          cpu: "2"
```

In this configuration, we are telling Kubernetes a very specific set of rules. If we patch the CPU limits, the Kubelet will adjust the cgroups and leave the container alone (NotRequired). The application will just run faster. However, if we patch the memory limits, the Kubelet knows it must restart the container (RestartContainer) so the application can read the new limit at startup and adjust its internal memory management. For a JVM, this only helps if the heap is derived from the container limit (for example with -XX:MaxRAMPercentage) rather than hard-coded with -Xmx.
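
The rule the Kubelet applies here can be sketched in a few lines of Python. This is an illustration of the policy lookup only, using the NotRequired and RestartContainer values from the Pod API; the function name needs_restart is hypothetical.

```python
def needs_restart(resize_policy: list[dict], changed: set[str]) -> bool:
    """Return True if any changed resource is marked RestartContainer."""
    policy = {p["resourceName"]: p["restartPolicy"] for p in resize_policy}
    # Resources without an explicit entry default to NotRequired.
    return any(policy.get(r, "NotRequired") == "RestartContainer" for r in changed)


heavy_calculator_policy = [
    {"resourceName": "cpu", "restartPolicy": "NotRequired"},
    {"resourceName": "memory", "restartPolicy": "RestartContainer"},
]
print(needs_restart(heavy_calculator_policy, {"cpu"}))            # False
print(needs_restart(heavy_calculator_policy, {"cpu", "memory"}))  # True
```

Note the second call: patching CPU and memory together still restarts the container, because one stubborn resource is enough.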

The vertical pod autoscaler is your marital therapist

Manually patching pods in the middle of the night is still a terrible way to manage infrastructure. The true power of in-place resizing is unlocked when you pair it with the Vertical Pod Autoscaler.

Historically, the VPA was a bit of a brute. It would watch your pods, realize they needed more memory, and then mercilessly murder them to apply the new sizes. It was effective, but highly destructive.

Now, the VPA features a magical mode called InPlaceOrRecreate. Think of this mode as a highly skilled couples therapist. It sits quietly in the background, observing the relationship between your application and its memory usage. When the application needs more space to grow, the VPA simply slides the walls outward without causing a scene. It only resorts to the nuclear option of recreating the pod if an in-place resize is technically impossible.

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: smooth-scaling-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: frontend-service
  updatePolicy:
    # The magic word that prevents the firing squad
    updateMode: "InPlaceOrRecreate"
```

With this single setting, your stateful workloads, long-running batch jobs, and memory-hungry APIs can adapt to traffic spikes, in most cases without dropping a single user request.

The fine print and the rottweiler problem

As with all things in distributed systems, there are rules. You cannot cheat the laws of physics. If a node is completely exhausted of resources, your in-place resize will sit in the Deferred state until something else on that node frees up capacity, which may be never. You still need the Cluster Autoscaler to add fresh nodes to your cluster. In-place resizing is a tool for distributing existing wealth, not for printing new money.

Furthermore, we must talk about the danger of scaling down. Increasing memory is a joyous occasion. Reducing memory limits on a running container is like trying to take a juicy steak out of the mouth of a hungry Rottweiler.

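The Linux kernel takes memory limits very seriously. If your application is currently using 3 Gigabytes of memory, and you smugly patch the pod to limit it to 2 Gigabytes, the kernel does not politely ask the application to clean up its garbage. Recent Kubelet versions make a best-effort attempt to hold back a shrink while usage is still above the new limit, but that check comes with no guarantees. If the smaller limit lands while the application still needs the memory, the Out Of Memory killer wakes up, grabs an axe, and immediately slaughters your application.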

Always scale down memory with extreme caution. It is almost always safer to wait for a natural deployment cycle to reduce memory requests than to try to forcefully shrink a running process.
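
If you do shrink a running container, check the current usage first. Here is a minimal Python sketch of such a guard. The function name and the 20 percent headroom are assumptions chosen for illustration, not a Kubernetes default, and the usage figure would come from whatever metrics source you already trust.

```python
def safe_to_shrink(usage_bytes: int, new_limit_bytes: int, headroom: float = 0.2) -> bool:
    """Allow a shrink only if usage stays below the new limit minus headroom."""
    return usage_bytes <= new_limit_bytes * (1 - headroom)


GIB = 1024**3
print(safe_to_shrink(3 * GIB, 2 * GIB))  # False: usage already exceeds the new limit
print(safe_to_shrink(1 * GIB, 2 * GIB))  # True: 1Gi sits under the 1.6Gi threshold
```

The headroom matters because usage is a moving target. A process sitting exactly at the new limit when you check can be over it by the time the patch lands.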

In the end, in-place pod resizing is not just a neat party trick. It is a fundamental maturation of Kubernetes as an operating system for the cloud. We are no longer treating our workloads like disposable cattle to be slaughtered at the first sign of trouble. We are treating them like slightly demanding house pets. Just give them the bigger bowl of food and let them sleep.